Introduction to Statistical Thinking

Chapter 16 Case Studies

16.1 Student Learning Objective

This chapter concludes the book. We start with a short review of the topics that were discussed in the second part of the book, the part that dealt with statistical inference. The main part of the chapter is the statistical analysis of two case studies, using the tools that were discussed in the book. We close the chapter, and the book, with some concluding remarks. By the end of this chapter, the student should be able to:

Review the concepts and methods for statistical inference that were presented in the second part of the book.

Apply these methods to the requirements of the analysis of real data.

Develop a resolve to learn more statistics.

16.2 A Review

The second part of the book dealt with statistical inference: the science of making general statements about an entire population on the basis of data from a sample. The basis for the statements is a theoretical model that produces the sampling distribution. Procedures for making the inference are evaluated based on their properties in the context of this sampling distribution. Procedures with desirable properties are applied to the data. One may attach to the output of this application summaries that describe these theoretical properties.

In particular, we dealt with two forms of making inference. One form was estimation and the other was hypothesis testing. The goal in estimation is to determine the value of a parameter in the population. Point estimates or confidence intervals may be used in order to fulfill this goal. The properties of point estimators may be assessed using the mean square error (MSE) and the properties of the confidence interval may be assessed using the confidence level.

The target in hypothesis testing is to decide between two competing hypotheses. These hypotheses are formulated in terms of population parameters. The decision rule is called a statistical test and is constructed with the aid of a test statistic and a rejection region. The default hypothesis of the two, the null hypothesis, is rejected if the test statistic falls in the rejection region. The major property a test must possess is a bound on the probability of a Type I error, the probability of erroneously rejecting the null hypothesis. This bound is called the significance level of the test. A test may also be assessed in terms of its statistical power, the probability of rightfully rejecting the null hypothesis.

Estimation and testing were applied in the context of single measurements and for the investigation of the relations between a pair of measurements. For single measurements we considered both numeric variables and factors. For numeric variables one may attempt to conduct inference on the expectation and/or the variance. For factors we considered the estimation of the probability of obtaining a level, or, more generally, the probability of the occurrence of an event.

We introduced statistical models that may be used to describe the relations between variables. One of the variables was designated as the response. The other variable, the explanatory variable, is identified as a variable which may affect the distribution of the response. Specifically, we considered numeric variables and factors that have two levels. If the explanatory variable is a factor with two levels then the analysis reduces to the comparison of two sub-populations, each one associated with a level. If the explanatory variable is numeric then a regression model may be applied, either linear or logistic regression, depending on the type of the response.

The foundations of statistical inference are the assumptions that we make in the form of statistical models. These models attempt to reflect reality. However, one is advised to apply healthy skepticism when using the models. First, one should be aware of what the assumptions are. Then one should ask oneself how reasonable these assumptions are in the context of the specific analysis. Finally, one should check, as much as one can, the validity of the assumptions in light of the information at hand. It is useful to plot the data and compare the plot to the assumptions of the model.

16.3 Case Studies

Let us apply the methods that were introduced throughout the book to two examples of data analysis. Both examples are taken from the Rice Virtual Lab in Statistics and can be found in its Case Studies section. The analysis of these case studies may involve any of the tools that were described in the second part of the book (and some from the first part). It may be useful to read Chapters 9 to 15 again before reading the case studies.

16.3.1 Physicians’ Reactions to the Size of a Patient

Overweight and obesity are common in many of the developed countries. In some cultures, obese individuals face discrimination in employment, education, and relationship contexts. The current research, conducted by Mikki Hebl and Jingping Xu [87], examines physicians’ attitude toward overweight and obese patients in comparison to their attitude toward patients who are not overweight.

The experiment included a total of 122 primary care physicians affiliated with one of three major hospitals in the Texas Medical Center of Houston. These physicians were sent a packet containing a medical chart similar to the one they view upon seeing a patient. This chart portrayed a patient who was displaying symptoms of a migraine headache but was otherwise healthy. Two variables (the gender and the weight of the patient) were manipulated across six different versions of the medical charts. The weight of the patient, described in terms of Body Mass Index (BMI), was average (BMI = 23), overweight (BMI = 30), or obese (BMI = 36). Physicians were randomly assigned to receive one of the six charts, and were asked to look over the chart carefully and complete two medical forms. The first form asked physicians which of 42 tests they would recommend giving to the patient. The second form asked physicians to indicate how much time they believed they would spend with the patient, and to describe the reactions that they would have toward this patient.

In this presentation, only the question on how much time the physicians believed they would spend with the patient is analyzed. Although three patient weight conditions were used in the study (average, overweight, and obese) only the average and overweight conditions will be analyzed. Therefore, there are two levels of patient weight (average and overweight) and one dependent variable (time spent).

The data for the given collection of responses from 72 primary care physicians is stored in the file “discriminate.csv” [88]. We start by reading the content of the file into a data frame by the name “patient” and presenting the summary of the variables:
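A minimal sketch of this step follows. Because the file itself is not reproduced here, the block builds a small synthetic data frame with the same variable names (“weight” and “time”) and the same group sizes as reported in the text; the individual values are invented for illustration, so the printed summary will not match the numbers quoted in this section. The commented line shows how the real file might be read, assuming it sits in the working directory.

```r
# Synthetic stand-in for "discriminate.csv"; these are NOT the study data.
patient <- data.frame(
  weight = factor(rep(c("BMI=23", "BMI=30"), times = c(33, 38))),
  time   = c(rep(c(15, 20, 30, 40, 45), times = c(3, 5, 14, 6, 5)),
             rep(c(10, 15, 20, 30, 45), times = c(4, 8, 10, 14, 2)))
)

# With the real file available, one might instead run (assumed call):
# patient <- read.csv("discriminate.csv", stringsAsFactors = TRUE)

# Counts per level for the factor, five-number summary and mean for "time"
summary(patient)
```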

Observe that of the 72 “patients”, 38 are overweight and 33 have an average weight. The time spent with the patient, as predicted by physicians, is distributed between 5 minutes and 1 hour, with an average of 27.82 minutes and a median of 30 minutes.

It is good practice to have a look at the data before doing the analysis. In this examination one should see that the numbers make sense and one should identify special features of the data. Even in this very simple example we may want to have a look at the histogram of the variable “time”:

A feature in this plot that catches attention is the high concentration of values in the interval between 25 and 30. Together with the fact that the median is equal to 30, one may suspect that, as a matter of fact, a large number of the values are actually equal to 30. Indeed, let us produce a table of the response:
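The histogram and the frequency table described above can be sketched as follows. The data frame is a synthetic stand-in with invented values (only the variable names and group sizes follow the text), so the counts it prints are illustrative and differ from those of the study.

```r
# Synthetic stand-in for the study data (values invented for illustration)
patient <- data.frame(
  weight = factor(rep(c("BMI=23", "BMI=30"), times = c(33, 38))),
  time   = c(rep(c(15, 20, 30, 40, 45), times = c(3, 5, 14, 6, 5)),
             rep(c(10, 15, 20, 30, 45), times = c(4, 8, 10, 14, 2)))
)

# Histogram of the response: look for spikes and asymmetry
hist(patient$time, main = "Histogram of time", xlab = "time (minutes)")

# Frequency of each distinct value; a spike at 30 shows up as a large count
time.counts <- table(patient$time)
time.counts
```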

Notice that 30 of the 72 physicians marked “30” as the time they expect to spend with the patient. This is the middle value in the range, and may just be the default value one marks if one just needs to complete a form and does not really attach much importance to the question that was asked.

The goal of the analysis is to examine the relation between overweight and the doctor’s response. The explanatory variable is a factor with two levels. The response is numeric. A natural tool for testing whether the expected response differs between the two levels is the \(t\)-test, which is implemented with the function “t.test”.

First we plot the relation between the response and the explanatory variable and then we apply the test:
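A sketch of the plot and the test is given below, using the formula interface of “boxplot” and “t.test”. The data are a synthetic stand-in with invented values, so the reported \(p\)-value and confidence interval will not equal those discussed in the text; the object name `fit` is chosen here for illustration.

```r
# Synthetic stand-in for the study data (values invented for illustration)
patient <- data.frame(
  weight = factor(rep(c("BMI=23", "BMI=30"), times = c(33, 38))),
  time   = c(rep(c(15, 20, 30, 40, 45), times = c(3, 5, 14, 6, 5)),
             rep(c(10, 15, 20, 30, 45), times = c(4, 8, 10, 14, 2)))
)

# Side-by-side box plots of the response for the two levels of the factor
boxplot(time ~ weight, data = patient, ylab = "time (minutes)")

# Two-sample t-test (Welch version by default, two-sided alternative)
fit <- t.test(time ~ weight, data = patient)
print(fit)

# The group means and the confidence interval for their difference
fit$estimate
fit$conf.int
```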

Nothing seems problematic in the box plot. The two distributions, as they are reflected in the box plots, look fairly symmetric.

When we consider the report that is produced by the function “t.test” we may observe that the \(p\)-value is equal to 0.005774. This \(p\)-value is computed in testing the null hypothesis that the expectations of the response for both types of patients are equal, against the two-sided alternative. Since the \(p\)-value is less than 0.05 we reject the null hypothesis.

The estimated value of the difference between the expectation of the response for a patient with BMI=23 and a patient with BMI=30 is \(31.36364 - 24.73684 \approx 6.63\) minutes. The confidence interval is (approximately) equal to \([1.99, 11.27]\). Hence, it looks as if the physicians expect to spend more time with the average weight patients.

After analyzing the effect of the explanatory variable on the expectation of the response one may want to examine the presence, or lack thereof, of such an effect on the variance of the response. Towards that end, one may use the function “var.test”:
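The comparison of variances can be sketched in the same way. The data frame is again a synthetic stand-in with invented values, and `vt` is a name chosen here; the estimated ratio it produces is illustrative only.

```r
# Synthetic stand-in for the study data (values invented for illustration)
patient <- data.frame(
  weight = factor(rep(c("BMI=23", "BMI=30"), times = c(33, 38))),
  time   = c(rep(c(15, 20, 30, 40, 45), times = c(3, 5, 14, 6, 5)),
             rep(c(10, 15, 20, 30, 45), times = c(4, 8, 10, 14, 2)))
)

# F-test of the null hypothesis that the two variances are equal
vt <- var.test(time ~ weight, data = patient)
print(vt)

# The estimate is the ratio of the two sample variances (first level over second)
vt$estimate
vt$conf.int
```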

In this test we do not reject the null hypothesis that the two variances of the response are equal since the \(p\)-value is larger than \(0.05\). The sample variances are almost equal to each other (their ratio is \(1.044316\)), with a confidence interval for the ratio that essentially ranges between 1/2 and 2.

The production of \(p\) -values and confidence intervals is just one aspect in the analysis of data. Another aspect, which typically is much more time consuming and requires experience and healthy skepticism is the examination of the assumptions that are used in order to produce the \(p\) -values and the confidence intervals. A clear violation of the assumptions may warn the statistician that perhaps the computed nominal quantities do not represent the actual statistical properties of the tools that were applied.

In this case, we have noticed the high concentration of the response at the value “30”. What is the situation when we split the sample between the two levels of the explanatory variable? Let us apply the function “table” once more, this time with the explanatory variable included:
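A two-way table of this kind may be produced as sketched below. The data are a synthetic stand-in with invented values, so the cell counts are illustrative; `time.by.weight` is a name chosen here.

```r
# Synthetic stand-in for the study data (values invented for illustration)
patient <- data.frame(
  weight = factor(rep(c("BMI=23", "BMI=30"), times = c(33, 38))),
  time   = c(rep(c(15, 20, 30, 40, 45), times = c(3, 5, 14, 6, 5)),
             rep(c(10, 15, 20, 30, 45), times = c(4, 8, 10, 14, 2)))
)

# Rows: distinct values of the response; columns: levels of the factor
time.by.weight <- table(patient$time, patient$weight)
time.by.weight
```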

Not surprisingly, there is still a high concentration at the level “30”. But one can see that only 2 of the responses of the “BMI=30” group are above that value, in comparison to a much more symmetric distribution of responses for the other group.

The simulations of the significance level of the one-sample \(t\)-test for an Exponential response that were conducted in Question \[ex:Testing.2\] may cast some doubt on how trustworthy the nominal \(p\)-values of the \(t\)-test are when the measurements are skewed. The skewness of the response for the group “BMI=30” is a reason for concern.

We may consider a different test, which is more robust, in order to validate the significance of our findings. For example, we may turn the response into a factor by setting one level for values larger than or equal to “30” and a different level for values less than “30”. The relation between the new response and the explanatory variable can be examined with the function “prop.test”. We first plot and then test:
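The dichotomized analysis can be sketched as follows, on a synthetic stand-in for the data (invented values, so the \(p\)-value is illustrative). The names `long`, `tab` and `pt` are chosen here. Placing the weight levels in the rows is a choice made in this sketch: “prop.test” treats the rows of a two-column table as the groups and the first column as the counted level, so the estimated proportions are those of the level “FALSE”. The transposed table gives the same \(p\)-value.

```r
# Synthetic stand-in for the study data (values invented for illustration)
patient <- data.frame(
  weight = factor(rep(c("BMI=23", "BMI=30"), times = c(33, 38))),
  time   = c(rep(c(15, 20, 30, 40, 45), times = c(3, 5, 14, 6, 5)),
             rep(c(10, 15, 20, 30, 45), times = c(4, 8, 10, 14, 2)))
)

# New response: TRUE when the predicted time is 30 minutes or more
patient$long <- factor(patient$time >= 30)

# 2 x 2 table: rows are the weight levels, columns are FALSE / TRUE
tab <- table(patient$weight, patient$long)
mosaicplot(tab, main = "time >= 30 by weight")

# Test for equality of the two proportions (with continuity correction)
pt <- prop.test(tab)
print(pt)
```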

The mosaic plot presents the relation between the explanatory variable and the new factor. The level “TRUE” is associated with a value of the predicted time spent with the patient being 30 minutes or more. The level “FALSE” is associated with a prediction of less than 30 minutes.

The computed \(p\)-value is equal to \(0.05409\), which almost reaches the significance level of 5% [89]. Notice that the probabilities that are being estimated by the function are the probabilities of the level “FALSE”. Overall, one may see the outcome of this test as supporting evidence for the conclusion of the \(t\)-test. However, the \(p\)-value provided by the \(t\)-test may overemphasize the evidence in the data for a significant difference in the physicians’ attitude towards overweight patients.

16.3.2 Physical Strength and Job Performance

The next case study involves an attempt to develop a measure of physical ability that is easy and quick to administer, does not risk injury, and is related to how well a person performs the actual job. The current example is based on a study by Blakley et al. [90], published in the journal Personnel Psychology.

There are a number of very important jobs that require, in addition to cognitive skills, a significant amount of strength to be able to perform at a high level. Construction worker, electrician and auto mechanic, all require strength in order to carry out critical components of their job. An interesting applied problem is how to select the best candidates from amongst a group of applicants for physically demanding jobs in a safe and a cost effective way.

The data presented in this case study, which may be used for the development of a method for selection among candidates, were collected from 147 individuals working in physically demanding jobs. Two measures of strength, grip strength and arm strength, were gathered from each participant. A piece of equipment known as the Jackson Evaluation System (JES) was used to collect the strength data. The JES can be configured to measure the strength of a number of muscle groups. The outcomes of these measurements were summarized in two scores of physical strength called “grip” and “arm”.

Two separate measures of job performance are presented in this case study. First, the supervisors of each of the participants were asked to rate how well their employee(s) perform on the physical aspects of their jobs. This measure is summarized in the variable “ratings”. Second, simulations of physically demanding work tasks were developed. The summary scores of these simulations are given in the variable “sims”. Higher values of either measure of performance indicate better performance.

The data for the 4 variables and 147 observations is stored in “job.csv” [91]. We start by reading the content of the file into a data frame by the name “job”, presenting a summary of the variables, and their histograms:
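A minimal sketch of this step is shown below. Since the file is not reproduced here, the block simulates a data frame with the same four variable names and 147 rows; the generating model and all of its constants are assumptions made only for illustration, so the output will not match the study. The commented line shows how the real file might be read, assuming it sits in the working directory.

```r
# Simulated stand-in for "job.csv"; these are NOT the study data.
set.seed(16)
n <- 147
common <- rnorm(n)  # shared strength component (an assumption of this sketch)
job <- data.frame(grip = 110 + 20 * common + rnorm(n, sd = 12),
                  arm  =  78 + 18 * common + rnorm(n, sd = 10))
job$sims    <- -5.434 + 0.024 * job$grip + 0.037 * job$arm + rnorm(n, sd = 1)
job$ratings <- 41 + 0.05 * job$grip + rnorm(n, sd = 8)

# With the real file available, one might instead run (assumed call):
# job <- read.csv("job.csv")

summary(job)

# One histogram per variable, arranged in a 2 x 2 grid
par(mfrow = c(2, 2))
for (v in names(job)) hist(job[[v]], main = v, xlab = v)
```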

All variables are numeric. Examination of the 4 summaries and histograms does not produce interesting findings. All variables are, more or less, symmetric, with the distribution of the variable “ratings” tending perhaps to be more uniform than the other three.

The main analyses of interest are attempts to relate the two measures of physical strength “ grip ” and “ arm ” with the two measures of job performance, “ ratings ” and “ sims ”. A natural tool to consider in this context is a linear regression analysis that relates a measure of physical strength as an explanatory variable to a measure of job performance as a response.

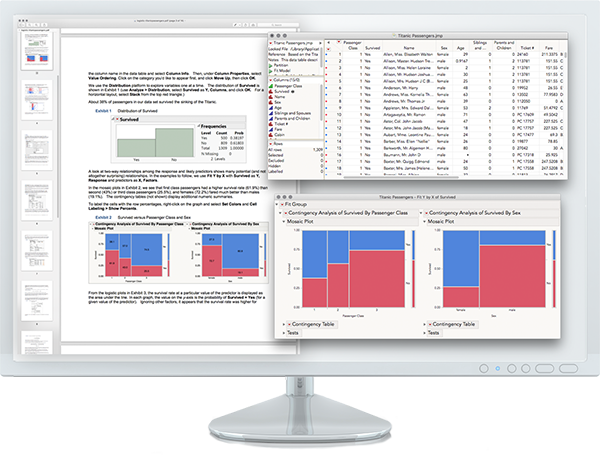

FIGURE 16.1: Scatter Plots and Regression Lines

Let us consider the variable “sims” as a response. The first step is to plot a scatter plot of the response and explanatory variable, for both explanatory variables. To the scatter plot we add the line of regression. In order to add the regression line we fit the regression model with the function “lm” and then apply the function “abline” to the fitted model. The plot for the relation between the response and the variable “grip” is produced by the code:
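A sketch of such code follows, run on simulated stand-in data (the generating constants are assumptions, not study values). The object name `sims.grip` is chosen here for illustration. Replacing “grip” by “arm” in the formula gives the corresponding plot for the other explanatory variable.

```r
# Simulated stand-in for the job data (NOT the study data)
set.seed(16)
n <- 147
common <- rnorm(n)  # shared strength component (an assumption of this sketch)
job <- data.frame(grip = 110 + 20 * common + rnorm(n, sd = 12),
                  arm  =  78 + 18 * common + rnorm(n, sd = 10))
job$sims <- -5.434 + 0.024 * job$grip + 0.037 * job$arm + rnorm(n, sd = 1)

# Fit the simple linear regression of the response on grip strength
sims.grip <- lm(sims ~ grip, data = job)

# Scatter plot of the response against the explanatory variable,
# with the fitted regression line added on top
plot(sims ~ grip, data = job)
abline(sims.grip)
```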

The plot that is produced by this code is presented on the upper-left panel of Figure 16.1 .

The plot for the relation between the response and the variable “ arm ” is produced by this code:

The plot that is produced by the last code is presented on the upper-right panel of Figure 16.1 .

Both plots show similar characteristics. There is an overall linear trend in the relation between the explanatory variable and the response. The value of the response increases with the increase in the value of the explanatory variable (a positive slope). The regression line seems to follow, more or less, the trend that is demonstrated by the scatter plot.

A more detailed analysis of the regression model is possible by the application of the function “summary” to the fitted model. First the case where the explanatory variable is “grip”:
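The report can be obtained as sketched below, again on simulated stand-in data, so the coefficients and R-squared differ from those quoted in the text. The last lines extract R-squared and its square root; for a regression with a single explanatory variable this square root equals the absolute value of the sample correlation between the response and the explanatory variable, which the sketch prints alongside for comparison.

```r
# Simulated stand-in for the job data (NOT the study data)
set.seed(16)
n <- 147
common <- rnorm(n)  # shared strength component (an assumption of this sketch)
job <- data.frame(grip = 110 + 20 * common + rnorm(n, sd = 12),
                  arm  =  78 + 18 * common + rnorm(n, sd = 10))
job$sims <- -5.434 + 0.024 * job$grip + 0.037 * job$arm + rnorm(n, sd = 1)

sims.grip <- lm(sims ~ grip, data = job)

# Coefficients, standard errors, p-values, and R-squared
summary(sims.grip)

# R-squared, its square root, and the sample correlation side by side
r2 <- summary(sims.grip)$r.squared
c(R.squared = r2, root = sqrt(r2), correlation = cor(job$sims, job$grip))
```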

Examination of the report reveals a clear statistical significance for the effect of the explanatory variable on the distribution of the response. The value of R-squared, the ratio of the variance of the response explained by the regression, is \(0.4094\). The square root of this quantity, \(\sqrt{0.4094} \approx 0.64\), is the proportion of the standard deviation of the response that is explained by the explanatory variable. Hence, about 64% of the variability in the response can be attributed to the measure of the strength of the grip.

For the variable “ arm ” we get:

This variable is also statistically significant. The value of R-squared is \(0.4706\). The proportion of the standard deviation that is explained by the strength of the arm is \(\sqrt{0.4706} \approx 0.69\), which is slightly higher than the proportion explained by the grip.

Overall, the explanatory variables do a fine job in the reduction of the variability of the response “sims” and may be used as substitutes for the response in order to select among candidates. A better prediction of the response based on the values of the explanatory variables can be obtained by combining the information in both variables. The production of such a combination is not discussed in this book, though it is similar in principle to the methods of linear regression that are presented in Chapter 14. The produced score [92] takes the form:

\[\texttt{score} = -5.434 + 0.024\cdot \texttt{grip} + 0.037\cdot \texttt{arm}\;.\]

We use this combined score as an explanatory variable. First we form the score and plot the relation between it and the response:
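Forming the score and plotting it against the response might look as follows. The coefficients are those of the formula above and the name `sims.score` is the one used in the footnotes; the data are a simulated stand-in (generating constants are assumptions), so the resulting plot is illustrative only.

```r
# Simulated stand-in for the job data (NOT the study data)
set.seed(16)
n <- 147
common <- rnorm(n)  # shared strength component (an assumption of this sketch)
job <- data.frame(grip = 110 + 20 * common + rnorm(n, sd = 12),
                  arm  =  78 + 18 * common + rnorm(n, sd = 10))
job$sims <- -5.434 + 0.024 * job$grip + 0.037 * job$arm + rnorm(n, sd = 1)

# Combined score, using the coefficients given in the text
job$score <- -5.434 + 0.024 * job$grip + 0.037 * job$arm

# Regress the response on the score, then plot with the fitted line
sims.score <- lm(sims ~ score, data = job)
plot(sims ~ score, data = job)
abline(sims.score)
```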

The scatter plot that includes the regression line can be found at the lower-left panel of Figure 16.1. Indeed, the linear trend is more pronounced for this scatter plot and the regression line is a better description of the relation between the response and the explanatory variable. A summary of the regression model produces the report:
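The report for the score model can be produced as in the sketch below. It runs on simulated stand-in data (assumed generating constants), so the R-squared it prints is not the value discussed in the text; `r2.score` is a name chosen here.

```r
# Simulated stand-in for the job data (NOT the study data)
set.seed(16)
n <- 147
common <- rnorm(n)  # shared strength component (an assumption of this sketch)
job <- data.frame(grip = 110 + 20 * common + rnorm(n, sd = 12),
                  arm  =  78 + 18 * common + rnorm(n, sd = 10))
job$sims  <- -5.434 + 0.024 * job$grip + 0.037 * job$arm + rnorm(n, sd = 1)
job$score <- -5.434 + 0.024 * job$grip + 0.037 * job$arm

sims.score <- lm(sims ~ score, data = job)
summary(sims.score)

# R-squared and its square root for the score model
r2.score <- summary(sims.score)$r.squared
c(R.squared = r2.score, root = sqrt(r2.score))
```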

Indeed, the score is highly significant. More important, the R-squared coefficient that is associated with the score is \(0.5422\), which corresponds to a ratio of the standard deviation that is explained by the model of \(\sqrt{0.5422} \approx 0.74\). Thus, almost 3/4 of the variability is accounted for by the score, so the score is a reasonable means of guessing what the results of the simulations will be. This guess is based only on the results of the simple tests of strength that are conducted with the JES device.

Before putting the final seal on the results let us examine the assumptions of the statistical model. First, with respect to the two explanatory variables: does each of them really measure a different property, or do they actually measure the same phenomenon? In order to examine this question let us look at the scatter plot that describes the relation between the two explanatory variables. This plot is produced using the code:
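A sketch of that code follows, on simulated stand-in data in which the two strength measures share a common component by construction (an assumption of this sketch, not a finding). The sample correlation is printed as a numeric companion to the plot and is an addition of this sketch.

```r
# Simulated stand-in for the job data (NOT the study data)
set.seed(16)
n <- 147
common <- rnorm(n)  # shared strength component (an assumption of this sketch)
job <- data.frame(grip = 110 + 20 * common + rnorm(n, sd = 12),
                  arm  =  78 + 18 * common + rnorm(n, sd = 10))

# Scatter plot of one explanatory variable against the other
plot(grip ~ arm, data = job)

# Strength of the linear association between the two measures
cor(job$grip, job$arm)
```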

It is presented in the lower-right panel of Figure 16.1 . Indeed, one may see that the two measurements of strength are not independent of each other but tend to produce an increasing linear trend. Hence, it should not be surprising that the relation of each of them with the response produces essentially the same goodness of fit. The computed score gives a slightly improved fit, but still, it basically reflects either of the original explanatory variables.

In light of this observation, one may want to consider other measures of strength that represent features of strength not captured by these two variables, namely measures that show less of a joint trend than the two considered.

Another element that should be examined is the set of probabilistic assumptions that underlie the regression model. We described the regression model only in terms of the functional relation between the explanatory variable and the expectation of the response. In the case of linear regression, for example, this relation was given in terms of a linear equation. However, another part of the model corresponds to the distribution of the measurements about the line of regression. The assumption that led to the computation of the reported \(p\)-values is that this distribution is Normal.

A method that can be used in order to investigate the validity of the Normal assumption is to analyze the residuals from the regression line. Recall that these residuals are computed as the difference between the observed value of the response and its estimated expectation, namely the fitted regression line. The residuals can be computed via the application of the function “ residuals ” to the fitted regression model.

Specifically, let us look at the residuals from the regression line that uses the score that is combined from the grip and arm measurements of strength. One may plot a histogram of the residuals:
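The residual diagnostics can be sketched as follows: a histogram in the upper panel and a Quantile-Quantile plot in the lower panel. The data are a simulated stand-in with Normal noise by construction (an assumption of this sketch), so the plots illustrate the technique rather than the study's result. The call to “qqline”, which adds a reference line, is an optional addition not mentioned in the text.

```r
# Simulated stand-in for the job data (NOT the study data)
set.seed(16)
n <- 147
common <- rnorm(n)  # shared strength component (an assumption of this sketch)
job <- data.frame(grip = 110 + 20 * common + rnorm(n, sd = 12),
                  arm  =  78 + 18 * common + rnorm(n, sd = 10))
job$sims  <- -5.434 + 0.024 * job$grip + 0.037 * job$arm + rnorm(n, sd = 1)
job$score <- -5.434 + 0.024 * job$grip + 0.037 * job$arm

sims.score <- lm(sims ~ score, data = job)

# Observed response minus fitted value, one residual per observation
res <- residuals(sims.score)

par(mfrow = c(2, 1))
hist(res, main = "Histogram of residuals", xlab = "residual")
qqnorm(res)   # points close to a straight line are consistent with Normality
qqline(res)   # reference line (optional addition)
```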

The produced histogram is presented on the upper panel. The histogram portrays a symmetric distribution that may result from Normally distributed observations. A better method to compare the distribution of the residuals to the Normal distribution is to use the Quantile-Quantile plot. This plot can be found on the lower panel. We do not discuss here the method by which this plot is produced [93]. However, we do say that any deviation of the points from a straight line is an indication of a violation of the assumption of Normality. In the current case, the points seem to be on a single line, which is consistent with the assumptions of the regression model.

The next task should be an analysis of the relations between the explanatory variables and the other response “ ratings ”. In principle one may use the same steps that were presented for the investigation of the relations between the explanatory variables and the response “ sims ”. But of course, the conclusion may differ. We leave this part of the investigation as an exercise to the students.

16.4 Summary

16.4.1 Concluding Remarks

The book included a description of some elements of statistics, elements that we thought are simple enough to be explained as part of an introductory course in statistics and are the minimum required of any person involved in academic activities in any field in which the analysis of data is required. Now, as you finish the book, it is as good a time as any to say some words regarding the elements of statistics that are missing from this book.

One element is more of the same. The statistical models that were presented are as simple as a model can get. A typical application will require more complex models. Each of these models may require specific methods for estimation and testing. The characteristics of inference, e.g. significance or confidence levels, rely on the assumptions that the models make. The user should be familiar with computational tools that can be used for the analysis of these more complex models. Familiarity with the probabilistic assumptions is required in order to be able to interpret the computer output, to diagnose possible divergence from the assumptions and to assess the severity of the possible effect of such divergence on the validity of the findings.

Statistical tools can be used for tasks other than estimation and hypothesis testing. For example, one may use statistics for prediction. In many applications it is important to assess what the values of future observations may be and in what range of values they are likely to occur. Statistical tools such as regression are natural in this context. However, the required task is not testing or estimating the values of parameters, but the prediction of future values of the response.

A different role of statistics is in the design stage. We hinted in that direction in Chapter \[ch:Confidence\], when we talked about the selection of a sample size in order to assure a confidence interval with a given accuracy. In most applications, the selection of the sample size emerges in the context of hypothesis testing, and the criterion for selection is the minimal power of the test, a minimal probability to detect a true finding. Yet, statistical design is much more than the determination of the sample size. Statistics may have a crucial input in the decision of how to collect the data. With an eye on the requirements for the final analysis, an experienced statistician can make sure that the data that is collected is indeed appropriate for that final analysis. Too often a researcher steps into the statistician’s office with data that he or she collected and asks, when it is already too late, for help in the analysis of data that cannot provide a satisfactory answer to the research question the researcher tried to address. It may be said, with some exaggeration, that good statisticians are required for the final analysis only in the case where the initial planning was poor.

Last, but not least, is the mathematical theory of statistics. We tried to introduce as little as possible of the relevant mathematics in this course. However, if one seriously intends to learn and understand statistics then one must become familiar with the relevant mathematical theory. Clearly, deep knowledge of the mathematical theory of probability is required. But apart from that, there is a rich and rapidly growing body of research that deals with the mathematical aspects of data analysis. One cannot be a good statistician unless one becomes familiar with the important aspects of this theory.

I should have started the book with the famous quotation: “Lies, damned lies, and statistics”. Instead, I am using it to end the book. Statistics can be used and can be misused. Learning statistics can give you the tools to tell the difference between the two. My goal in writing the book is achieved if reading it will mark for you the beginning of the process of learning statistics and not the end of the process.

16.4.2 Discussion in the Forum

In the second part of the book we have learned many subjects. Most of these subjects, especially for those that had no previous exposure to statistics, were unfamiliar. In this forum we would like to ask you to share with us the difficulties that you encountered.

What was the topic that was most difficult for you to grasp? In your opinion, what was the source of the difficulty?

When forming your answer to this question, we would appreciate it if you could elaborate and give details of what the problem was. Pointing to deficiencies in the learning material and to confusing explanations will help us improve the presentation in future editions of this book.

[87] Hebl, M. and Xu, J. (2001). Weighing the care: Physicians’ reactions to the size of a patient. International Journal of Obesity, 25, 1246-1252.

[88] The file can be found on the internet at http://pluto.huji.ac.il/~msby/StatThink/Datasets/discriminate.csv

[89] One may propose splitting the response into two groups, with one group being associated with values of “time” strictly larger than 30 minutes and the other with values less than or equal to 30. The resulting \(p\)-value from the expression “prop.test(table(patient$time>30,patient$weight))” is \(0.01276\). However, the number of subjects in one of the cells of the table is equal only to 2, which is problematic in the context of the Normal approximation that is used by this test.

[90] Blakley, B.A., Quiñones, M.A., Crawford, M.S., and Jago, I.A. (1994). The validity of isometric strength tests. Personnel Psychology, 47, 247-274.

[91] The file can be found on the internet at http://pluto.huji.ac.il/~msby/StatThink/Datasets/job.csv

[92] The score is produced by the application of the function “lm” to both variables as explanatory variables. The code expression that can be used is “lm(sims ~ grip + arm, data=job)”.

[93] Generally speaking, the plot is composed of the empirical percentiles of the residuals, plotted against the theoretical percentiles of the standard Normal distribution. The current plot is produced by the expression “qqnorm(residuals(sims.score))”.


Statistics By Jim

Making statistics intuitive

What is a Case Study? Definition & Examples

By Jim Frost

Case Study Definition

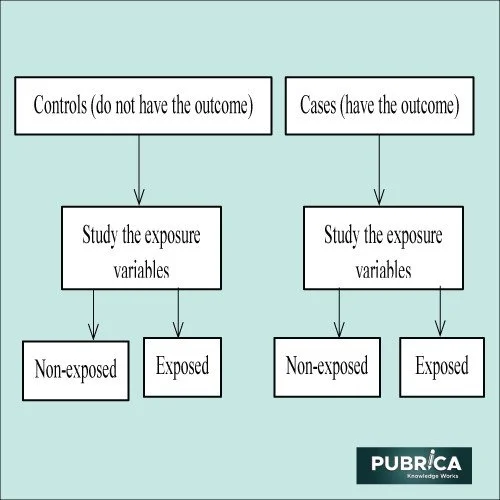

A case study is an in-depth investigation of a single person, group, event, or community. This research method involves intensively analyzing a subject to understand its complexity and context. The richness of a case study comes from its ability to capture detailed, qualitative data that can offer insights into a process or subject matter that other research methods might miss.

A case study strives for a holistic understanding of events or situations by examining all relevant variables. They are ideal for exploring ‘how’ or ‘why’ questions in contexts where the researcher has limited control over events in real-life settings. Unlike narrowly focused experiments, these projects seek a comprehensive understanding of events or situations.

In a case study, researchers gather data through various methods such as participant observation, interviews, tests, record examinations, and writing samples. Unlike statistically-based studies that seek only quantifiable data, a case study attempts to uncover new variables and pose questions for subsequent research.

A case study is particularly beneficial when your research:

- Requires a deep, contextual understanding of a specific case.

- Needs to explore or generate hypotheses rather than test them.

- Focuses on a contemporary phenomenon within a real-life context.

Learn more about Other Types of Experimental Design .

Case Study Examples

Various fields utilize case studies, including the following:

- Social sciences: For understanding complex social phenomena.

- Business: For analyzing corporate strategies and business decisions.

- Healthcare: For detailed patient studies and medical research.

- Education: For understanding educational methods and policies.

- Law: For in-depth analysis of legal cases.

For example, consider a case study in a business setting where a startup struggles to scale. Researchers might examine the startup’s strategies, market conditions, management decisions, and competition. Interviews with the CEO, employees, and customers, alongside an analysis of financial data, could offer insights into the challenges and potential solutions for the startup. This research could serve as a valuable lesson for other emerging businesses.

See below for other examples.

| Research question | Case study |
| --- | --- |
| What impact does urban green space have on mental health in high-density cities? | Assess a green space development in Tokyo and its effects on resident mental health. |
| How do small businesses adapt to rapid technological changes? | Examine a small business in Silicon Valley adapting to new tech trends. |
| What strategies are effective in reducing plastic waste in coastal cities? | Study plastic waste management initiatives in Barcelona. |
| How do educational approaches differ in addressing diverse learning needs? | Investigate a specialized school’s approach to inclusive education in Sweden. |
| How does community involvement influence the success of public health initiatives? | Evaluate a community-led health program in rural India. |
| What are the challenges and successes of renewable energy adoption in developing countries? | Assess solar power implementation in a Kenyan village. |

Types of Case Studies

Several standard types of case studies exist that vary based on the objectives and specific research needs.

Illustrative Case Study: Descriptive in nature, these studies use one or two instances to depict a situation, helping to familiarize the unfamiliar and establish a common understanding of the topic.

Exploratory Case Study: Conducted as precursors to large-scale investigations, they assist in raising relevant questions, choosing measurement types, and identifying hypotheses to test.

Cumulative Case Study: These studies compile information from various sources over time to enhance generalization without the need for costly, repetitive new studies.

Critical Instance Case Study: Focused on specific sites, they either explore unique situations with limited generalizability or challenge broad assertions, to identify potential cause-and-effect issues.

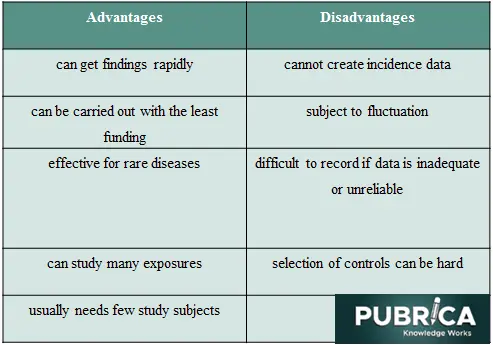

Pros and Cons

As with any research study, case studies have a set of benefits and drawbacks.

Pros:

- Provides comprehensive and detailed data.

- Offers a real-life perspective.

- Flexible and can adapt to discoveries during the study.

- Enables investigation of scenarios that are hard to assess in laboratory settings.

- Facilitates studying rare or unique cases.

- Generates hypotheses for future experimental research.

Cons:

- Time-consuming and may require a lot of resources.

- Hard to generalize findings to a broader context.

- Potential for researcher bias.

- Cannot establish causality.

- Lacks scientific rigor compared to more controlled research methods.

Crafting a Good Case Study: Methodology

While case studies emphasize specific details over broad theories, they should connect to theoretical frameworks in the field. This approach ensures that these projects contribute to the existing body of knowledge on the subject, rather than standing as an isolated entity.

The following are critical steps in developing a case study:

- Define the Research Questions: Clearly outline what you want to explore. Define specific, achievable objectives.

- Select the Case: Choose a case that best suits the research questions. Consider using a typical case for general understanding or an atypical subject for unique insights.

- Data Collection: Use a variety of data sources, such as interviews, observations, documents, and archival records, to provide multiple perspectives on the issue.

- Data Analysis: Identify patterns and themes in the data.

- Report Findings: Present the findings in a structured and clear manner.

Analysts typically use thematic analysis to identify patterns and themes within the data and compare different cases.

- Qualitative Analysis: Such as coding and thematic analysis for narrative data.

- Quantitative Analysis: In cases where numerical data is involved.

- Triangulation: Combining multiple methods or data sources to enhance accuracy.

A good case study requires a balanced approach, often using both qualitative and quantitative methods.

The researcher should constantly reflect on their biases and how they might influence the research. Documenting personal reflections can provide transparency.

Avoid over-generalization. One common mistake is to overstate the implications of a case study. Remember that these studies provide in-depth insights into a specific case and might not be widely applicable.

Don’t ignore contradictory data. All data, even that which contradicts your hypothesis, is valuable. Ignoring it can lead to skewed results.

Finally, in the report, researchers provide comprehensive insight for a case study through “thick description,” which entails a detailed portrayal of the subject, its usage context, the attributes of involved individuals, and the community environment. Thick description extends to interpreting various data, including demographic details, cultural norms, societal values, prevailing attitudes, and underlying motivations. This approach ensures a nuanced and in-depth comprehension of the case in question.

Morland, J. & Feagin, Joe & Orum, Anthony & Sjoberg, Gideon. (1992). A Case for the Case Study. Social Forces. 71(1):240.

Methodologic and Data-Analysis Triangulation in Case Studies: A Scoping Review

Margarithe Charlotte Schlunegger

1 Department of Health Professions, Applied Research & Development in Nursing, Bern University of Applied Sciences, Bern, Switzerland

2 Faculty of Health, School of Nursing Science, Witten/Herdecke University, Witten, Germany

Maya Zumstein-Shaha

Rebecca Palm

3 Department of Health Care Research, Carl von Ossietzky University Oldenburg, Oldenburg, Germany

Associated Data

Supplemental material, sj-docx-1-wjn-10.1177_01939459241263011 for Methodologic and Data-Analysis Triangulation in Case Studies: A Scoping Review by Margarithe Charlotte Schlunegger, Maya Zumstein-Shaha and Rebecca Palm in Western Journal of Nursing Research

Objective:

We sought to explore the processes of methodologic and data-analysis triangulation in case studies using the example of research on nurse practitioners in primary health care.

Design and methods:

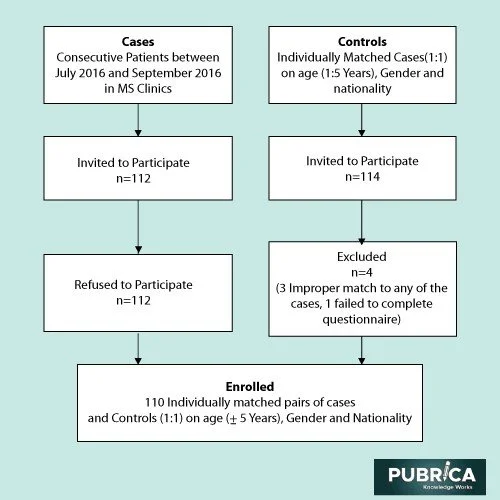

We conducted a scoping review within Arksey and O’Malley’s methodological framework, considering studies that defined a case study design and used 2 or more data sources, published in English or German before August 2023.

Data sources:

The databases searched were MEDLINE and CINAHL, supplemented with hand searching of relevant nursing journals. We also examined the reference list of all the included studies.

Results:

In total, 63 reports were assessed for eligibility. Ultimately, we included 8 articles. Five studies described within-method triangulation, whereas 3 provided information on between/across-method triangulation. No study reported within-method triangulation of 2 or more quantitative data-collection procedures. The data-collection procedures were interviews, observation, documentation/documents, service records, and questionnaires/assessments. The data-analysis triangulation involved various qualitative and quantitative methods of analysis. Details about comparing or contrasting results from different qualitative and mixed-methods data were lacking.

Conclusions:

Various processes for methodologic and data-analysis triangulation are described in this scoping review but lack detail, thus hampering standardization in case study research, potentially affecting research traceability. Triangulation is complicated by terminological confusion. To advance case study research in nursing, authors should reflect critically on the processes of triangulation and employ existing tools, like a protocol or mixed-methods matrix, for transparent reporting. The only existing reporting guideline should be complemented with directions on methodologic and data-analysis triangulation.

Case study research is defined as “an empirical method that investigates a contemporary phenomenon (the ‘case’) in depth and within its real-world context, especially when the boundaries between phenomenon and context may not be clearly evident. A case study relies on multiple sources of evidence, with data needing to converge in a triangulating fashion.” 1 (p15) This design is described as a stand-alone research approach equivalent to grounded theory and can entail single and multiple cases. 1 , 2 However, case study research should not be confused with single clinical case reports. “Case reports are familiar ways of sharing events of intervening with single patients with previously unreported features.” 3 (p107) As a methodology, case study research encompasses substantially more complexity than a typical clinical case report. 1 , 3

A particular characteristic of case study research is the use of various data sources, such as quantitative data originating from questionnaires as well as qualitative data emerging from interviews, observations, or documents. Therefore, a case study always draws on multiple sources of evidence, and the data must converge in a triangulating manner. 1 When using multiple data sources, a case or cases can be examined more convincingly and accurately, compensating for the weaknesses of the respective data sources. 1 Another characteristic is the interaction of various perspectives. This involves comparing or contrasting perspectives of people with different points of view, eg, patients, staff, or leaders. 4 Through triangulation, case studies contribute to the completeness of the research on complex topics, such as role implementation in clinical practice. 1 , 5 Triangulation involves a combination of researchers from various disciplines, of theories, of methods, and/or of data sources. By creating connections between these sources (ie, investigator, theories, methods, data sources, and/or data analysis), a new understanding of the phenomenon under study can be obtained. 6 , 7

This scoping review focuses on methodologic and data-analysis triangulation because concrete procedures are missing, eg, in reporting guidelines. Methodologic triangulation has been called methods, mixed methods, or multimethods. 6 It can encompass within-method triangulation and between/across-method triangulation. 7 “Researchers using within-method triangulation use at least 2 data-collection procedures from the same design approach.” 6 (p254) Within-method triangulation is either qualitative or quantitative but not both. Therefore, within-method triangulation can also be considered data source triangulation. 8 In contrast, “researchers using between/across-method triangulation employ both qualitative and quantitative data-collection methods in the same study.” 6 (p254) Hence, methodologic approaches are combined as well as various data sources. For this scoping review, the term “methodologic triangulation” is maintained to denote between/across-method triangulation. “Data-analysis triangulation is the combination of 2 or more methods of analyzing data.” 6 (p254)
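The distinction between within-method and between/across-method triangulation can be expressed as a small classification rule. The following is a minimal sketch, not a procedure taken from the review: the function name, the labels, and the example procedures are assumptions made for illustration.

```python
QUALITATIVE = "qualitative"
QUANTITATIVE = "quantitative"


def classify_triangulation(procedures: dict[str, str]) -> str:
    """Classify a study by its data-collection procedures.

    `procedures` maps a procedure name (hypothetical, e.g. "interviews")
    to its design approach: "qualitative" or "quantitative".
    """
    approaches = set(procedures.values())
    unknown = approaches - {QUALITATIVE, QUANTITATIVE}
    if unknown:
        raise ValueError(f"unknown design approach: {sorted(unknown)}")
    # Triangulation needs at least 2 data-collection procedures.
    if len(procedures) < 2:
        return "no methodologic triangulation"
    # Both approaches in the same study: between/across-method.
    if approaches == {QUALITATIVE, QUANTITATIVE}:
        return "between/across-method"
    # Two or more procedures from one approach: within-method.
    return "within-method"


qualitative_only = {"interviews": QUALITATIVE, "observations": QUALITATIVE}
mixed = {"interviews": QUALITATIVE, "questionnaires": QUANTITATIVE}
print(classify_triangulation(qualitative_only))  # within-method
print(classify_triangulation(mixed))             # between/across-method
```

The rule treats a study with a single procedure as not triangulated, which follows from the "at least 2 data-collection procedures" wording in the quoted definition.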

Although much has been published on case studies, there is little consensus on the quality of the various data sources, the most appropriate methods, or the procedures for conducting methodologic and data-analysis triangulation. 5 According to the EQUATOR (Enhancing the QUAlity and Transparency Of health Research) clearinghouse for reporting guidelines, one standard exists for organizational case studies. 9 Organizational case studies provide insights into organizational change in health care services. 9 Rodgers et al 9 pointed out that, although high-quality studies are being funded and published, they are sometimes poorly articulated and methodologically inadequate. In the reporting checklist by Rodgers et al, 9 a description of the data collection is included, but reporting directions on methodologic and data-analysis triangulation are missing. Therefore, the purpose of this study was to examine the process of methodologic and data-analysis triangulation in case studies. Accordingly, we conducted a scoping review to elicit descriptions of and directions for triangulation methods and analysis, drawing on case studies of nurse practitioners (NPs) in primary health care as an example. Case studies are recommended to evaluate the implementation of new roles in (primary) health care, such as that of NPs. 1 , 5 Case studies on new role implementation can generate a unique and in-depth understanding of specific roles (individual), teams (smaller groups), family practices or similar institutions (organization), and social and political processes in health care systems. 1 , 10 The integration of NPs into health care systems is at different stages of progress around the world. 11 Therefore, studies are needed to evaluate this process.

The methodological framework by Arksey and O’Malley 12 guided this scoping review. We examined the current scientific literature on the use of methodologic and data-analysis triangulation in case studies on NPs in primary health care. The review process included the following stages: (1) establishing the research question; (2) identifying relevant studies; (3) selecting the studies for inclusion; (4) charting the data; (5) collating, summarizing, and reporting the results; and (6) consulting experts in the field. 12 Stage 6 was not performed due to a lack of financial resources. The reporting of the review followed the PRISMA-ScR (Preferred Reporting Items for Systematic Reviews and Meta-Analyses extension for Scoping Review) guideline by Tricco et al 13 (guidelines for reporting systematic reviews and meta-analyses [ Supplementary Table A ]). Scoping reviews are not eligible for registration in PROSPERO.

Stage 1: Establishing the Research Question

The aim of this scoping review was to examine the process of triangulating methods and analysis in case studies on NPs in primary health care to improve the reporting. We sought to answer the following question: How have methodologic and data-analysis triangulation been conducted in case studies on NPs in primary health care? To answer the research question, we examined the following elements of the selected studies: the research question, the study design, the case definition, the selected data sources, and the methodologic and data-analysis triangulation.

Stage 2: Identifying Relevant Studies

A systematic database search was performed in the MEDLINE (via PubMed) and CINAHL (via EBSCO) databases between July and September 2020 to identify relevant articles. The following terms were used as keyword search strategies: (“Advanced Practice Nursing” OR “nurse practitioners”) AND (“primary health care” OR “Primary Care Nursing”) AND (“case study” OR “case studies”). Searches were limited to English- and German-language articles. Hand searches were conducted in the journals Nursing Inquiry, BMJ Open, and BioMed Central (BMC). We also screened the reference lists of the studies included. The database search was updated in August 2023. The complete search strategy for all the databases is presented in Supplementary Table B.
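The keyword strategy has a regular shape: synonyms are joined with OR inside each concept, and the concepts are joined with AND. As a hedged illustration, the sketch below assembles that string from lists of terms. The helper names are hypothetical, straight quotes are used instead of the typographic quotes in the text, and database-specific syntax (field tags, subject headings) is not modeled.

```python
def or_group(terms: list[str]) -> str:
    """Join synonyms for one concept with OR, quoting each phrase."""
    return "(" + " OR ".join(f'"{term}"' for term in terms) + ")"


def build_query(concepts: list[list[str]]) -> str:
    """Join the concept groups with AND."""
    return " AND ".join(or_group(terms) for terms in concepts)


concepts = [
    ["Advanced Practice Nursing", "nurse practitioners"],  # population
    ["primary health care", "Primary Care Nursing"],       # context
    ["case study", "case studies"],                        # design
]
query = build_query(concepts)
print(query)
```

Keeping the terms in lists makes it easier to report the same strategy for each database and to rerun it when a search is updated.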

Stage 3: Selecting the Studies

Inclusion and exclusion criteria.

We used the inclusion and exclusion criteria reported in Table 1 . We included studies of NPs who had at least a master’s degree in nursing according to the definition of the International Council of Nurses. 14 This scoping review considered studies that were conducted in primary health care practices in rural, urban, and suburban regions. We excluded reviews and study protocols in which no data collection had occurred. Articles were included without limitations on the time period or country of origin.

Table 1. Inclusion and Exclusion Criteria.

| Criteria | Inclusion | Exclusion |
|---|---|---|
| Population | NPs with a master’s degree in nursing or higher | Nurses with a bachelor’s degree in nursing or lower; pre-registration nursing students; no definition of master’s degree in nursing described in the publication |
| Interest | Description/definition of a case study design; two or more data sources | Reviews; study protocols; summaries/comments/discussions |
| Context | Primary health care; family practices and home visits (including adult practices, internal medicine practices, community health centers) | Nursing homes, hospital, hospice |

Screening process

After the search, we collated and uploaded all the identified records into EndNote v.X8 (Clarivate Analytics, Philadelphia, Pennsylvania) and removed any duplicates. Two independent reviewers (MCS and SA) screened the titles and abstracts for assessment in line with the inclusion criteria. They retrieved and assessed the full texts of the selected studies while applying the inclusion criteria. Any disagreements about the eligibility of studies were resolved by discussion or, if no consensus could be reached, by involving experienced researchers (MZ-S and RP).
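The dual-screening step described above amounts to comparing two independent sets of include/exclude decisions and routing any disagreement to discussion. A minimal sketch follows, assuming hypothetical record identifiers and decisions; it is not the authors' tooling, which was EndNote.

```python
def split_by_agreement(
    reviewer_a: dict[str, bool], reviewer_b: dict[str, bool]
) -> tuple[list[str], list[str], list[str]]:
    """Compare two reviewers' decisions (True = include).

    Returns (agreed_include, agreed_exclude, to_discuss).
    """
    if reviewer_a.keys() != reviewer_b.keys():
        raise ValueError("both reviewers must screen the same records")
    agreed_include, agreed_exclude, to_discuss = [], [], []
    for record_id in sorted(reviewer_a):
        a, b = reviewer_a[record_id], reviewer_b[record_id]
        if a != b:
            to_discuss.append(record_id)  # resolved by discussion or a third researcher
        elif a:
            agreed_include.append(record_id)
        else:
            agreed_exclude.append(record_id)
    return agreed_include, agreed_exclude, to_discuss


# Hypothetical decisions for four records.
first = {"r1": True, "r2": False, "r3": True, "r4": False}
second = {"r1": True, "r2": False, "r3": False, "r4": False}
include, exclude, discuss = split_by_agreement(first, second)
print(include, exclude, discuss)  # ['r1'] ['r2', 'r4'] ['r3']
```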

Stages 4 and 5: Charting the Data and Collating, Summarizing, and Reporting the Results

The first reviewer (MCS) extracted data from the selected publications. For this purpose, an extraction tool developed by the authors was used. This tool comprised the following criteria: author(s), year of publication, country, research question, design, case definition, data sources, and methodologic and data-analysis triangulation. First, we extracted and summarized information about the case study design. Second, we narratively summarized the way in which the data and methodological triangulation were described. Finally, we summarized the information on within-case or cross-case analysis. This process was performed using Microsoft Excel. One reviewer (MCS) extracted data, whereas another reviewer (SA) cross-checked the data extraction, making suggestions for additions or edits. Any disagreements between the reviewers were resolved through discussion.

A total of 149 records were identified in 2 databases. We removed 20 duplicates and screened 129 reports by title and abstract. A total of 46 reports were assessed for eligibility. Through hand searches, we identified 117 additional records. Of these, we excluded 98 reports after title and abstract screening. A total of 17 reports were assessed for eligibility. From the 2 databases and the hand search, 63 reports were assessed for eligibility. Ultimately, we included 8 articles for data extraction. No further articles were included after the reference list screening of the included studies. A PRISMA flow diagram of the study selection and inclusion process is presented in Figure 1. As shown in Tables 2 and 3, the articles included in this scoping review were published between 2010 and 2022 in Canada (n = 3), the United States (n = 2), Australia (n = 2), and Scotland (n = 1).
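The counts in this paragraph can be cross-checked with simple arithmetic. The sketch below is an illustrative consistency check using only the numbers reported above; the dictionary keys and the function name are assumptions. It shows that the database counts and the total add up, and that 19 hand-search records remain after screening while 17 were assessed for eligibility. The passage itself does not explain that difference.

```python
flow = {
    "database_records": 149,
    "duplicates_removed": 20,
    "database_screened": 129,
    "database_full_text": 46,
    "hand_search_records": 117,
    "hand_search_excluded": 98,
    "hand_search_full_text": 17,
    "total_full_text": 63,
    "included": 8,
}
countries = {"Canada": 3, "United States": 2, "Australia": 2, "Scotland": 1}


def check_flow(flow: dict[str, int]) -> list[str]:
    """Return a list of counts that do not reconcile."""
    problems = []
    if flow["database_records"] - flow["duplicates_removed"] != flow["database_screened"]:
        problems.append("database screening count does not match")
    if flow["database_full_text"] + flow["hand_search_full_text"] != flow["total_full_text"]:
        problems.append("total assessed for eligibility does not match")
    remaining = flow["hand_search_records"] - flow["hand_search_excluded"]
    if remaining != flow["hand_search_full_text"]:
        problems.append(
            f"hand search: {remaining} remain after screening, "
            f"{flow['hand_search_full_text']} assessed for eligibility"
        )
    return problems


problems = check_flow(flow)
excluded_at_full_text = flow["total_full_text"] - flow["included"]
print(problems)
print(excluded_at_full_text, sum(countries.values()))
```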

Figure 1. PRISMA flow diagram.

Table 2. Characteristics of Articles Included.

| Author | Contandriopoulos et al | Flinter | Hogan et al | Hungerford et al | O’Rourke | Roots and MacDonald | Schadewaldt et al | Strachan et al |
|---|---|---|---|---|---|---|---|---|
| Country | Canada | The United States | The United States | Australia | Canada | Canada | Australia | Scotland |
| How or why research question | No information on the research question | Several how or why research questions | What and how research question | No information on the research question | Several how or why research questions | No information on the research question | What research question | What and why research questions |
| Design and referenced author of methodological guidance | Six qualitative case studies; Robert K. Yin | Multiple-case studies design; Robert K. Yin | Multiple-case studies design; Robert E. Stake | Case study design; Robert K. Yin | Qualitative single-case study; Robert K. Yin, Robert E. Stake, Sharan Merriam | Single-case study design; Robert K. Yin, Sharan Merriam | Multiple-case studies design; Robert K. Yin, Robert E. Stake | Multiple-case studies design |
| Case definition | Team of health professionals (Small group) | Nurse practitioners (Individuals) | Primary care practices (Organization) | Community-based NP model of practice (Organization) | NP-led practice (Organization) | Primary care practices (Organization) | No information on case definition | Health board (Organization) |

Table 3. Overview of Within-Method, Between/Across-Method, and Data-Analysis Triangulation.

| Author | Contandriopoulos et al | Flinter | Hogan et al | Hungerford et al | O’Rourke | Roots and MacDonald | Schadewaldt et al | Strachan et al |
|---|---|---|---|---|---|---|---|---|
| Within-method triangulation (at least 2 data-collection procedures from the same design approach) | | | | | | | | |
| Qualitative data-collection procedures: | | | | | | | | |
| Interviews | x | | x | x | x | | | x |
| Observations | | | x | x | | | | |
| Public documents | x | | | | x | | | x |
| Electronic health records | | | x | | | | | |
| Between/across-method triangulation (both qualitative and quantitative data-collection procedures in the same study) | | | | | | | | |
| Qualitative data-collection procedures: | | | | | | | | |
| Interviews | | x | | | | x | x | |
| Observations | | | | | | x | x | |
| Public documents | | | | | | x | x | |
| Electronic health records | | x | | | | | | |
| Quantitative data-collection procedures: | | | | | | | | |
| Self-assessment | | x | | | | | | |
| Service records | | | | | | x | | |
| Questionnaires | | | | | | | x | |
| Data-analysis triangulation (combination of 2 or more methods of analyzing data) | | | | | | | | |
| Mixed-methods analysis, qualitative: | | | | | | | | |
| Deductive | | x | | | | x | x | |
| Inductive | | | | | | x | x | |
| Thematic | | | | | | x | x | |
| Content | | | | | | | | |
| Mixed-methods analysis, quantitative: | | | | | | | | |
| Descriptive analysis | | x | | | | x | x | |
| Qualitative methods of analysis: | | | | | | | | |
| Deductive | x | | | x | x | | | x |
| Inductive | | | | | x | | | x |
| Thematic | | | | | | | | x |
| Content | | | | | x | | | |

Research Question, Case Definition, and Case Study Design

The following sections describe the research question, case definition, and case study design. Case studies are most appropriate when asking “how” or “why” questions. 1 According to Yin, 1 how and why questions are explanatory and lead to the use of case studies, histories, and experiments as the preferred research methods. In 1 study from Canada, eg, the following research question was presented: “How and why did stakeholders participate in the system change process that led to the introduction of the first nurse practitioner-led Clinic in Ontario?” (p7) 19 Once the research question has been formulated, the case should be defined and, subsequently, the case study design chosen. 1 In typical case studies with mixed methods, the 2 types of data are gathered concurrently in a convergent design and the results merged to examine a case and/or compare multiple cases. 10

Research question

“How” or “why” questions were found in 4 studies. 16 , 17 , 19 , 22 Two studies additionally asked “what” questions. Three studies described an exploratory approach, and 1 study presented an explanatory approach. Of these 4 studies, 3 studies chose a qualitative approach 17 , 19 , 22 and 1 opted for mixed methods with a convergent design. 16

In the remaining studies, either the research questions were not clearly stated or no “how” or “why” questions were formulated. For example, “what” questions were found in 1 study. 21 No information was provided on exploratory, descriptive, and explanatory approaches. Schadewaldt et al 21 chose mixed methods with a convergent design.

Case definition and case study design

A total of 5 studies defined the case as an organizational unit. 17 , 18 - 20 , 22 Of the 8 articles, 4 reported multiple-case studies. 16 , 17 , 22 , 23 Another 2 publications involved single-case studies. 19 , 20 Moreover, 2 publications did not state the case study design explicitly.

Within-Method Triangulation

This section describes within-method triangulation, which involves employing at least 2 data-collection procedures within the same design approach. 6 , 7 This can also be called data source triangulation. 8 Next, we present the single data-collection procedures in detail. In 5 studies, information on within-method triangulation was found. 15 , 17 - 19 , 22 Studies describing a quantitative approach and the triangulation of 2 or more quantitative data-collection procedures could not be included in this scoping review.

Qualitative approach

Five studies used qualitative data-collection procedures. Two studies combined face-to-face interviews and documents. 15 , 19 One study mixed in-depth interviews with observations, 18 and 1 study combined face-to-face interviews and documentation. 22 One study contained face-to-face interviews, observations, and documentation. 17 The combination of different qualitative data-collection procedures was used to present the case context in an authentic and complex way, to elicit the perspectives of the participants, and to obtain a holistic description and explanation of the cases under study.

All 5 studies used qualitative interviews as the primary data-collection procedure. 15 , 17 - 19 , 22 Face-to-face, in-depth, and semi-structured interviews were conducted. The topics covered in the interviews included processes in the introduction of new care services and experiences of barriers and facilitators to collaborative work in general practices. Two studies did not specify the type of interviews conducted and did not report sample questions. 15 , 18

Observations

In 2 studies, qualitative observations were carried out. 17 , 18 During the observations, the physical design of the clinical patients’ rooms and office spaces was examined. 17 Hungerford et al 18 did not explain what information was collected during the observations. In both studies, the type of observation was not specified. Observations were generally recorded as field notes.

Public documents

In 3 studies, various qualitative public documents were studied. 15 , 19 , 22 These documents included role description, education curriculum, governance frameworks, websites, and newspapers with information about the implementation of the role and general practice. Only 1 study failed to specify the type of document and the collected data. 15

Electronic health records

In 1 study, qualitative documentation was investigated. 17 This included a review of dashboards (eg, provider productivity reports or provider quality dashboards in the electronic health record) and quality performance reports (eg, practice-wide or co-management team-wide performance reports).

Between/Across-Method Triangulation

This section describes the between/across methods, which involve employing both qualitative and quantitative data-collection procedures in the same study. 6 , 7 This procedure can also be denoted “methodologic triangulation.” 8 Subsequently, we present the individual data-collection procedures. In 3 studies, information on between/across triangulation was found. 16 , 20 , 21

Mixed methods

Three studies used qualitative and quantitative data-collection procedures. One study combined face-to-face interviews, documentation, and self-assessments. 16 One study employed semi-structured interviews, direct observation, documents, and service records, 20 and another study combined face-to-face interviews, non-participant observation, documents, and questionnaires. 23

All 3 studies used qualitative interviews as the primary data-collection procedure. 16 , 20 , 23 Face-to-face and semi-structured interviews were conducted. In the interviews, data were collected on the introduction of new care services and experiences of barriers to and facilitators of collaborative work in general practices.

Observation

In 2 studies, direct and non-participant qualitative observations were conducted. 20 , 23 During the observations, the interaction between health professionals or the organization and the clinical context was observed. Observations were generally recorded as field notes.

Public documents

In 2 studies, various qualitative public documents were examined. 20 , 23 These documents included role description, newspapers, websites, and practice documents (eg, flyers). In the documents, information on the role implementation and role description of NPs was collected.

Individual journals

In 1 study, qualitative individual journals were studied. 16 These included reflective journals from NPs, who performed the role in primary health care.

Service records

Only 1 study involved quantitative service records. 20 These service records were obtained from the primary care practices and the respective health authorities. They were collected before and after the implementation of an NP role to identify changes in patients’ access to health care, the volume of patients served, and patients’ use of acute care services.

Questionnaires/Assessment

In 2 studies, quantitative questionnaires were used to gather information about the teams’ satisfaction with collaboration. 16 , 21 In 1 study, 3 validated scales were used. The scales measured experience, satisfaction, and belief in the benefits of collaboration. 21 Psychometric performance indicators of these scales were provided. However, the time points of data collection were not specified; similarly, whether the questionnaires were completed online or by hand was not mentioned. A competency self-assessment tool was used in another study. 16 The assessment comprised 70 items and included topics such as health promotion, protection, disease prevention and treatment, the NP-patient relationship, the teaching-coaching function, the professional role, managing and negotiating health care delivery systems, monitoring and ensuring the quality of health care practice, and cultural competence. Psychometric performance indicators were provided. The assessment was completed online with 2 measurement time points (pre self-assessment and post self-assessment).

Data-Analysis Triangulation

This section describes data-analysis triangulation, which involves the combination of 2 or more methods of analyzing data. 6 Subsequently, we present within-case analysis and cross-case analysis.

Mixed-methods analysis

Three studies combined qualitative and quantitative methods of analysis. 16 , 20 , 21 Two studies involved deductive and inductive qualitative analysis, and qualitative data were analyzed thematically. 20 , 21 One used deductive qualitative analysis. 16 The method of analysis was not specified in the studies. Quantitative data were analyzed using descriptive statistics in 3 studies. 16 , 20 , 23 The descriptive statistics comprised the calculation of the mean, median, and frequencies.
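The descriptive statistics named here (mean, median, and frequencies) can be computed with the Python standard library. The sketch below uses invented questionnaire scores purely for illustration; the values and the function name are assumptions and do not come from the included studies.

```python
from collections import Counter
from statistics import mean, median


def describe(values: list[int]) -> dict:
    """Return the mean, median, and frequency of each distinct value."""
    if not values:
        raise ValueError("need at least one value")
    return {
        "mean": mean(values),
        "median": median(values),
        "frequencies": dict(Counter(values)),
    }


# Hypothetical satisfaction ratings on a 1-5 scale.
satisfaction_scores = [3, 4, 4, 5, 2, 4]
summary = describe(satisfaction_scores)
print(summary)
```

For ordinal ratings such as these, the median and the frequency table are often easier to interpret than the mean.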

Qualitative methods of analysis

Two studies combined deductive and inductive qualitative analysis, 19 , 22 and 2 studies only used deductive qualitative analysis. 15 , 18 Qualitative data were analyzed thematically in 1 study, 22 and data were treated with content analysis in the other. 19 The method of analysis was not specified in the 2 studies.

Within-case analysis

In 7 studies, a within-case analysis was performed. 15 - 20 , 22 Six studies used qualitative data for the within-case analysis, and 1 study employed qualitative and quantitative data. Data were analyzed separately, consecutively, or in parallel. The themes generated from qualitative data were compared and then summarized. The individual cases were presented mostly as a narrative description. Quantitative data were integrated into the qualitative description with tables and graphs. Qualitative and quantitative data were also presented as a narrative description.

Cross-case analyses

Of the multiple-case studies, 5 carried out cross-case analyses. 15 - 17 , 20 , 22 Three studies described the cross-case analysis using qualitative data. Two studies reported a combination of qualitative and quantitative data for the cross-case analysis. In each multiple-case study, the individual cases were contrasted to identify the differences and similarities between the cases. One study did not specify whether a within-case or a cross-case analysis was conducted. 23
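Contrasting cases to identify similarities and differences can be sketched with set operations once each case has a list of themes. The example below is a simplified illustration with invented case names and themes; real cross-case analysis involves interpretation that a set comparison does not capture.

```python
def cross_case_comparison(
    themes_by_case: dict[str, set[str]]
) -> tuple[set[str], dict[str, set[str]]]:
    """Return themes shared by all cases and themes unique to each case."""
    if not themes_by_case:
        return set(), {}
    # Similarities: themes present in every case.
    shared = set.intersection(*(set(t) for t in themes_by_case.values()))
    # Differences: themes found in one case and in no other.
    unique = {}
    for case, themes in themes_by_case.items():
        others = set().union(
            *(set(t) for c, t in themes_by_case.items() if c != case)
        )
        unique[case] = set(themes) - others
    return shared, unique


# Hypothetical themes from three primary care practices.
cases = {
    "practice_a": {"role clarity", "time pressure", "funding"},
    "practice_b": {"role clarity", "time pressure"},
    "practice_c": {"role clarity", "team trust"},
}
shared, unique = cross_case_comparison(cases)
print(shared)  # {'role clarity'}
print(unique)
```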

Confirmation or contradiction of data

This section describes confirmation or contradiction through qualitative and quantitative data. 1 , 4 Qualitative and quantitative data were reported separately, with little connection between them. As a result, neither confirmation nor contradiction between the data could be clearly determined.

Confirmation or contradiction among qualitative data

In 3 studies, the consistency of the results of different types of qualitative data was highlighted. 16 , 19 , 21 In particular, documentation and interviews or interviews and observations were contrasted:

- Confirmation between interviews and documentation: The data from these sources corroborated the existence of a common vision for an NP-led clinic. 19

- Confirmation among interviews and observation: NPs experienced pressure to find and maintain their position within the existing system. Nurse practitioners and general practitioners performed complete episodes of care, each without collaborative interaction. 21

- Contradiction among interviews and documentation: For example, interviewees mentioned that differentiating the scope of practice between NPs and physicians is difficult as there are too many areas of overlap. However, a clear description of the scope of practice for the 2 roles was provided. 21

Confirmation through a combination of qualitative and quantitative data

Both types of data showed that NPs and general practitioners wanted to have more time in common to discuss patient cases and engage in personal exchanges. 21 In addition, the qualitative and quantitative data confirmed the individual progression of NPs from less competent to more competent. 16 One study pointed out that qualitative and quantitative data yielded similar results for the cases. 20 For example, both indicated that integrating NPs improved patient access by increasing appointment availability.

Contradiction through a combination of qualitative and quantitative data

Although questionnaire results indicated that NPs and general practitioners experienced high levels of collaboration and satisfaction with the collaborative relationship, the qualitative results drew a more ambivalent picture of NPs’ and general practitioners’ experiences with collaboration. 21

Research Question and Design

The studies included in this scoping review posed a variety of research questions. The recommended formats (ie, how or why questions) were not applied consistently. Where a research question does not follow these formats, a case study design should not be applied, because the research question is the major guide for determining the research design. 2 Furthermore, case definitions and designs were applied variably. The lack of standardization is reflected in differences in the reporting of these case studies. Generally, case study research is viewed as allowing much more freedom and flexibility. 5 , 24 However, this flexibility and the lack of uniform specifications lead to confusion.

Methodologic Triangulation

Methodologic triangulation, as described in the literature, can be somewhat confusing as it can refer to either data-collection methods or research designs. 6 , 8 For example, methodologic triangulation can allude to qualitative and quantitative methods, indicating a paradigmatic connection. Methodologic triangulation can also point to qualitative and quantitative data-collection methods, analysis, and interpretation without specific philosophical stances. 6 , 8 Regarding “data-collection methods with no philosophical stances,” we would recommend using the wording “data source triangulation” instead. Thus, the demarcation between the method and the data-collection procedures will be clearer.

Within-Method and Between/Across-Method Triangulation

Yin 1 advocated the use of multiple sources of evidence so that a case or cases can be investigated more comprehensively and accurately. Most studies included multiple data-collection procedures. Five studies employed a variety of qualitative data-collection procedures, and 3 studies used qualitative and quantitative data-collection procedures (mixed methods). In contrast, no study contained 2 or more quantitative data-collection procedures. In particular, quantitative data-collection procedures—such as validated, reliable questionnaires, scales, or assessments—were not used exhaustively. The prerequisites for using multiple data-collection procedures are availability, the knowledge and skill of the researcher, and sufficient financial funds. 1 To meet these prerequisites, research teams consisting of members with different levels of training and experience are necessary. Multidisciplinary research teams need to be aware of the strengths and weaknesses of different data sources and collection procedures. 1

Qualitative methods of analysis and results

When using multiple data sources and analysis methods, it is necessary to present the results in a coherent manner. Although the importance of multiple data sources and analysis has been emphasized, 1 , 5 the description of triangulation has tended to be brief. Thus, traceability of the research process is not always ensured. The sparse description of the data-analysis triangulation procedure may be due to the limited number of words in publications or the complexity involved in merging the different data sources.

Only a few concrete recommendations regarding the operationalization of the data-analysis triangulation with the qualitative data process were found. 25 A total of 3 approaches have been proposed 25 : (1) the intuitive approach, in which researchers intuitively connect information from different data sources; (2) the procedural approach, in which each comparative or contrasting step in triangulation is documented to ensure transparency and replicability; and (3) the intersubjective approach, which necessitates a group of researchers agreeing on the steps in the triangulation process. For each case study, one of these 3 approaches needs to be selected, carefully carried out, and documented. Thus, in-depth examination of the data can take place. Farmer et al 25 concluded that most researchers take the intuitive approach; therefore, triangulation is not clearly articulated. This trend is also evident in our scoping review.

Mixed-methods analysis and results

Few studies in this scoping review used a combination of qualitative and quantitative analysis. However, creating a comprehensive stand-alone picture of a case from both qualitative and quantitative methods is challenging. Findings derived from different data types may not automatically coalesce into a coherent whole. 4 O’Cathain et al 26 described 3 techniques for combining the results of qualitative and quantitative methods: (1) developing a triangulation protocol; (2) following a thread by selecting a theme from 1 component and following it across the other components; and (3) developing a mixed-methods matrix.

Of the 3 techniques, the triangulation protocol is described in the most detail. It is applied at the interpretation stage of the research process. 26 This protocol was developed for multiple qualitative data but can also be applied to a combination of qualitative and quantitative data. 25 , 26 It is possible to determine agreement, partial agreement, “silence,” or dissonance between the results of qualitative and quantitative data. The protocol is intended to bring together the various themes from the qualitative and quantitative results and identify overarching meta-themes. 25 , 26
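The four outcomes of the triangulation protocol (agreement, partial agreement, silence, dissonance) can be sketched as a simple coding rule. The rule below is an assumption made for illustration, not the procedure published by the cited authors: each theme is given one summary code per data source (`"positive"`, `"negative"`, `"mixed"`, or `None` when the source does not address the theme), and the function name `convergence` and the theme labels are hypothetical.

```python
from typing import Optional


def convergence(qual: Optional[str], quant: Optional[str]) -> str:
    """Code one theme by comparing its qualitative and quantitative finding.

    Each argument is 'positive', 'negative', 'mixed', or None (theme absent
    from that data source). This coding rule is an illustrative assumption.
    """
    if qual is None and quant is None:
        raise ValueError("theme must appear in at least one data source")
    if qual is None or quant is None:
        return "silence"            # only one source speaks to the theme
    if qual == quant:
        return "agreement"          # both sources point the same way
    if "mixed" in (qual, quant):
        return "partial agreement"  # one source is ambivalent
    return "dissonance"             # sources point in opposite directions


# Hypothetical themes; placeholders, not findings from the reviewed studies.
themes = {
    "theme A": ("positive", "positive"),
    "theme B": ("mixed", "positive"),
    "theme C": ("positive", None),
    "theme D": ("negative", "positive"),
}

coded = {name: convergence(q, n) for name, (q, n) in themes.items()}
for name, code in coded.items():
    print(f"{name}: {code}")
```

Writing the rule down explicitly, in code or in a table, is one way to document each comparative step, in line with the procedural approach.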

The “following a thread” technique is used in the analysis stage of the research process. To begin, each data source is analyzed to identify the most important themes that need further investigation. Subsequently, the research team selects 1 theme from 1 data source and follows it up in the other data source, thereby creating a thread. The individual steps of this technique are not specified. 26 , 27

A mixed-methods matrix is used at the end of the analysis. 26 All the data collected on a defined case are examined together in 1 large matrix, paying attention to cases rather than variables or themes. In a mixed-methods matrix (eg, a table), the rows represent the cases for which both qualitative and quantitative data exist. The columns show the findings for each case. This technique allows the research team to look for congruency, surprises, and paradoxes among the findings as well as patterns across multiple cases. In our review, we identified only one of these 3 approaches in the study by Roots and MacDonald. 20 These authors mentioned that a causal network analysis was performed using a matrix. However, no further details were given, and reference was made to a later publication. We could not find this publication.
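The row-and-column layout of a mixed-methods matrix can be sketched in a few lines of Python. The cases, findings, and helper names (`render`, `incongruent_cases`) are hypothetical placeholders chosen for illustration; real matrices would hold richer summaries per cell.

```python
# Hypothetical cases and findings; placeholders, not results from any study.
matrix = [
    {"case": "Clinic A", "qualitative": "access improved", "quantitative": "access improved"},
    {"case": "Clinic B", "qualitative": "access improved", "quantitative": "no change"},
    {"case": "Clinic C", "qualitative": "no change", "quantitative": "no change"},
]

COLUMNS = ("case", "qualitative", "quantitative")


def render(rows, columns=COLUMNS):
    """Format the matrix as a plain-text table: one row per case."""
    widths = [max([len(c)] + [len(r[c]) for r in rows]) for c in columns]
    lines = ["  ".join(c.ljust(w) for c, w in zip(columns, widths))]
    for r in rows:
        lines.append("  ".join(r[c].ljust(w) for c, w in zip(columns, widths)))
    return "\n".join(lines)


def incongruent_cases(rows):
    """Return the cases whose qualitative and quantitative findings differ."""
    return [r["case"] for r in rows if r["qualitative"] != r["quantitative"]]


print(render(matrix))
print("Incongruent:", incongruent_cases(matrix))
```

Keeping one row per case makes it straightforward to scan for congruency or surprises within a case and for patterns across cases.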

Case Studies in Nursing Research and Recommendations

Because it focused on the implementation of NPs in primary health care, the setting of this scoping review was narrow. However, triangulation is essential for research in this area. This type of research was found to provide a good basis for understanding methodologic and data-analysis triangulation. Despite the lack of traceability in the description of the data and methodological triangulation, we believe that case studies are an appropriate design for exploring new nursing roles in existing health care systems. This is evidenced by the fact that case study research is widely used in many social science disciplines as well as in professional practice. 1 To strengthen this research method and increase the traceability in the research process, we recommend using the reporting guideline and reporting checklist by Rodgers et al. 9 This reporting checklist needs to be complemented with methodologic and data-analysis triangulation. A procedural approach needs to be followed in which each comparative step of the triangulation is documented. 25 A triangulation protocol or a mixed-methods matrix can be used for this purpose. 26 If there is a word limit in a publication, the triangulation protocol or mixed-methods matrix needs to be identified. A schematic representation of methodologic and data-analysis triangulation in case studies can be found in Figure 2.

Figure 2. Schematic representation of methodologic and data-analysis triangulation in case studies (own work).

Limitations

This study has several limitations that must be acknowledged. Given the nature of scoping reviews, we did not analyze the evidence reported in the studies. However, 2 reviewers independently reviewed all the full-text reports with respect to the inclusion criteria. The focus on the primary care setting with NPs (master’s degree) was very narrow, and only a few studies qualified. Thus, potentially important methodological aspects that would have contributed to answering the questions were omitted. Studies describing the triangulation of 2 or more quantitative data-collection procedures could not be included in this scoping review due to the inclusion and exclusion criteria.

Conclusions

Given the various processes described for methodologic and data-analysis triangulation, we can conclude that triangulation in case studies is poorly standardized. Consequently, the traceability of the research process is not always ensured. Triangulation is further complicated by confusion over terminology. To advance case study research in nursing, we encourage authors to reflect critically on methodologic and data-analysis triangulation and use existing tools, such as the triangulation protocol or mixed-methods matrix and the reporting guideline checklist by Rodgers et al, 9 to ensure more transparent reporting.

Acknowledgments

The authors thank Simona Aeschlimann for her support during the screening process.

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding: The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material: Supplemental material for this article is available online.

Research Writing and Analysis

Writing a Case Study

What is a case study?

A case study is:

- An in-depth research design that primarily uses a qualitative methodology but sometimes includes quantitative methodology.

- Used to examine an identifiable problem confirmed through research.

- Used to investigate an individual, group of people, organization, or event.

- Used mostly to answer "how" and "why" questions.

What are the different types of case studies?

| Type | Description | Example research question |
|---|---|---|
| Descriptive | This type of case study allows the researcher to: | How has the implementation and use of the instructional coaching intervention for elementary teachers impacted students’ attitudes toward reading? |
| Explanatory | This type of case study allows the researcher to: | Why do differences exist when implementing the same online reading curriculum in three elementary classrooms? |
| Exploratory | This type of case study allows the researcher to: | What are potential barriers to students’ reading success when middle school teachers implement the Ready Reader curriculum online? |