Static Single Assignment (with relevant examples)

Static Single Assignment was presented in 1988 by Barry K. Rosen, Mark N. Wegman, and F. Kenneth Zadeck.

In compiler design, Static Single Assignment (SSA) is a way of structuring the intermediate representation (IR) so that every variable is assigned a value exactly once and every variable is defined before its use. The main benefit of SSA is that it simplifies the properties of variables, which in turn simplifies compiler optimisation algorithms and improves their results. Some algorithms improved by the application of SSA –

- Constant Propagation – Translation of calculations from runtime to compile time. E.g. – the instruction v = 2*7+13 is treated like v = 27

- Value Range Propagation – Finding the possible range of values a calculation could result in.

- Dead Code Elimination – Removing the code which is not accessible and will have no effect on results whatsoever.

- Strength Reduction – Replacing computationally expensive calculations by inexpensive ones.

- Register Allocation – Optimising the use of registers for calculations.

Any code can be converted to SSA form by replacing the target variable of each assignment with a new version of that variable, and substituting each use of a variable with the version that reaches that point. Versions are created by splitting the original variables in the IR and are written as the original name with a subscript, so that every definition gets its own version.
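The renaming rule above can be sketched in a few lines of Python for straight-line code (no branches). This is a minimal illustration, not a production algorithm: the function name `to_ssa`, the tuple layout, and the five-statement sample program are assumptions chosen for this sketch. The first version of a variable keeps its plain name and later versions get a numeric suffix.

```python
def to_ssa(statements):
    """Rename straight-line three-address code into SSA form.

    Each statement is a (target, left, operator, right) tuple of strings.
    """
    version = {}  # variable name -> current version number

    def name(var):
        n = version[var]
        return var if n == 1 else f"{var}_{n}"

    def use(operand):
        if not operand.isidentifier():
            return operand  # numeric literal, nothing to rename
        version.setdefault(operand, 1)  # first sight of an incoming value
        return name(operand)

    result = []
    for target, left, op, right in statements:
        # Uses are renamed before the new definition takes effect.
        new_left, new_right = use(left), use(right)
        version[target] = version.get(target, 0) + 1
        result.append(f"{name(target)} = {new_left} {op} {new_right}")
    return result


# Hypothetical input program, for illustration only.
program = [
    ("x", "y", "-", "z"),
    ("s", "x", "+", "s"),
    ("x", "s", "+", "p"),
    ("s", "z", "*", "q"),
    ("s", "x", "*", "s"),
]
ssa = to_ssa(program)
for line in ssa:
    print(line)
```

Every target on the left-hand side is now unique, and each use refers to the most recent version defined before it.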

Example #1:

Convert the following code segment to SSA form:

Here x, y, z, s, p, q are original variables and x_2, s_2, s_3, s_4 are versions of x and s.

Example #2:

Here a, b, c, d, e, q, s are original variables and a_2, q_2, q_3 are versions of a and q.

Phi function and SSA codes

Three-address code may also contain goto statements, so a variable may receive its value from two different paths.

Consider the following example:-

Example #3:

When we try to convert the above three address code to SSA form, the output looks like:-

Attempt #3:

We need to decide which value y should take: x_1 or x_2. We therefore introduce phi functions, which resolve the correct value of a variable when two different computation paths created by branching meet.

Hence, the correct SSA codes for the example will be:-

Solution #3:

Thus, whenever three-address code has a branch and control may flow along two different paths, we need phi functions for the variables that are assigned on those paths.
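One way to picture a phi function is as a lookup keyed by the path that control actually took. The Python sketch below simulates that at run time; the block labels `then` and `else`, the constants, and the helper names `phi` and `run` are all hypothetical and are not taken from the example above.

```python
def phi(predecessor, incoming, values):
    """Pick the SSA value that belongs to the block we arrived from."""
    return values[incoming[predecessor]]


def run(condition):
    values = {}
    if condition:
        values["x_1"] = 10      # definition on the first path
        predecessor = "then"
    else:
        values["x_2"] = 20      # definition on the second path
        predecessor = "else"
    # Merge point: y = phi(x_1 from "then", x_2 from "else")
    return phi(predecessor, {"then": "x_1", "else": "x_2"}, values)


print(run(True), run(False))
```

Only one of x_1 and x_2 exists on any given execution, which is why the merge needs to know where control came from.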


ENOSUCHBLOG

Programming, philosophy, pedaling.

Understanding static single assignment forms

Oct 23, 2020. Tags: llvm, programming

This post is at least a year old.

With thanks to Niki Carroll, winny, and kurufu for their invaluable proofreading and advice.

By popular demand, I’m doing another LLVM post. This time, it’s static single assignment (or SSA) form, a common feature in the intermediate representations of optimizing compilers.

Like the last one, SSA is a topic in compiler and IR design that I mostly understand but could benefit from some self-guided education on. So here we are.

How to represent a program

At the highest level, a compiler’s job is singular: to turn some source language input into some machine language output. Internally, this breaks down into a sequence of clearly delineated[1] tasks:

1. Lexing the source into a sequence of tokens

2. Parsing the token stream into an abstract syntax tree, or AST[2]

3. Validating the AST (e.g., ensuring that all uses of identifiers are consistent with the source language’s scoping and definition rules)[3]

4. Translating the AST into machine code, with all of its complexities (instruction selection, register allocation, frame generation, &c)

In a single-pass compiler, (4) is monolithic: machine code is generated as the compiler walks the AST, with no revisiting of previously generated code. This is extremely fast (in terms of compiler performance) in exchange for a few significant limitations:

Optimization potential: because machine code is generated in a single pass, it can’t be revisited for optimizations. Single-pass compilers tend to generate extremely slow and conservative machine code.

By way of example: the System V ABI (used by Linux and macOS) defines a special 128-byte region beyond the current stack pointer (%rsp), known as the red zone, that can be used by leaf functions whose stack frames fit within it. This, in turn, saves a few stack management instructions in the function prologue and epilogue.

A single-pass compiler will struggle to take advantage of this ABI-supplied optimization: it needs to emit a stack slot for each automatic variable as they’re visited, and cannot revisit its function prologue for erasure if all variables fit within the red zone.

Language limitations: single-pass compilers struggle with common language design decisions, like allowing use of identifiers before their declaration or definition. For example, the following is valid C++:

```cpp
class Rect {
  public:
    int area() { return width() * height(); }
    int width() { return 5; }
    int height() { return 5; }
};
```

C and C++ generally require pre-declaration and/or definition for identifiers, but member function bodies may reference the entire class scope. This will frustrate a single-pass compiler, which expects Rect::width and Rect::height to already exist in some symbol lookup table for call generation.

Consequently, (virtually) all modern compilers are multi-pass.

Multi-pass compilers break the translation phase down even more:

- The AST is lowered into an intermediate representation, or IR

- Analyses (or passes) are performed on the IR, refining it according to some optimization profile (code size, performance, &c)

- The IR is either translated to machine code or lowered to another IR, for further target specialization or optimization[4]

So, we want an IR that’s easy to correctly transform and that’s amenable to optimization. Let’s talk about why IRs that have the static single assignment property fill that niche.

At its core, the SSA form of any source program introduces only one new constraint: all variables are assigned (i.e., stored to) exactly once.

By way of example: the following (not actually very helpful) function is not in a valid SSA form with respect to the flags variable:

```c
#include <fcntl.h>   /* open, O_RDWR, O_CREAT */
#include <unistd.h>  /* access, F_OK */

int helpful_open(char *fname) {
  int flags = O_RDWR;

  if (!access(fname, F_OK)) {
    flags |= O_CREAT;
  }

  int fd = open(fname, flags, 0644);

  return fd;
}
```

Why? Because flags is stored to twice: once for initialization, and (potentially) again inside the conditional body.

As programmers, we could rewrite helpful_open to only ever store once to each automatic variable:

```c
int helpful_open(char *fname) {
  if (!access(fname, F_OK)) {
    int flags = O_RDWR | O_CREAT;
    return open(fname, flags, 0644);
  } else {
    int flags = O_RDWR;
    return open(fname, flags, 0644);
  }
}
```

But this is clumsy and repetitive: we essentially need to duplicate every chain of uses that follow any variable that is stored to more than once. That’s not great for readability, maintainability, or code size.

So, we do what we always do: make the compiler do the hard work for us. Fortunately there exists a transformation from every valid program into an equivalent SSA form, conditioned on two simple rules.

Rule #1: Whenever we see a store to an already-stored variable, we replace it with a brand new “version” of that variable.

Using rule #1 and the example above, we can rewrite flags using _N suffixes to indicate versions:

```c
int helpful_open(char *fname) {
  int flags_0 = O_RDWR;

  // Declared up here to avoid dealing with C scopes.
  int flags_1;
  if (!access(fname, F_OK)) {
    flags_1 = flags_0 | O_CREAT;
  }

  int fd = open(fname, flags_1, 0644);

  return fd;
}
```

But wait a second: we’ve made a mistake!

- open(..., flags_1, ...) is incorrect: it unconditionally assigns O_CREAT, which wasn’t in the original function semantics.

- open(..., flags_0, ...) is also incorrect: it never assigns O_CREAT, and thus is wrong for the same reason.

So, what do we do? We use rule 2!

Rule #2: Whenever we need to choose a variable based on control flow, we use the Phi function (φ) to introduce a new variable based on our choice.

Using our example once more:

```c
int helpful_open(char *fname) {
  int flags_0 = O_RDWR;

  // Declared up here to avoid dealing with C scopes.
  int flags_1;
  if (!access(fname, F_OK)) {
    flags_1 = flags_0 | O_CREAT;
  }

  int flags_2 = φ(flags_0, flags_1);

  int fd = open(fname, flags_2, 0644);

  return fd;
}
```

Our quandary is resolved: open always takes flags_2, where flags_2 is a fresh SSA variable produced by applying φ to flags_0 and flags_1.

Observe, too, that φ is a symbolic function: compilers that use SSA forms internally do not emit real φ functions in generated code[5]. φ exists solely to reconcile rule #1 with the existence of control flow.

As such, it’s a little bit silly to talk about SSA forms with C examples (since C and other high-level languages are what we’re translating from in the first place). Let’s dive into how LLVM’s IR actually represents them.

SSA in LLVM

First of all, let’s see what happens when we run our very first helpful_open through clang with no optimizations:

```llvm
define dso_local i32 @helpful_open(i8* %fname) #0 {
entry:
  %fname.addr = alloca i8*, align 8
  %flags = alloca i32, align 4
  %fd = alloca i32, align 4
  store i8* %fname, i8** %fname.addr, align 8
  store i32 2, i32* %flags, align 4
  %0 = load i8*, i8** %fname.addr, align 8
  %call = call i32 @access(i8* %0, i32 0) #4
  %tobool = icmp ne i32 %call, 0
  br i1 %tobool, label %if.end, label %if.then

if.then:                                          ; preds = %entry
  %1 = load i32, i32* %flags, align 4
  %or = or i32 %1, 64
  store i32 %or, i32* %flags, align 4
  br label %if.end

if.end:                                           ; preds = %if.then, %entry
  %2 = load i8*, i8** %fname.addr, align 8
  %3 = load i32, i32* %flags, align 4
  %call1 = call i32 (i8*, i32, ...) @open(i8* %2, i32 %3, i32 420)
  store i32 %call1, i32* %fd, align 4
  %4 = load i32, i32* %fd, align 4
  ret i32 %4
}
```

(View it on Godbolt .)

So, we call open with %3, which comes from… a load from an i32* named %flags? Where’s the φ?

This is something that consistently trips me up when reading LLVM’s IR: only values, not memory, are in SSA form. Because we’ve compiled with optimizations disabled, %flags is just a stack slot that we can store into as many times as we please, and that’s exactly what LLVM has elected to do above.

As such, LLVM’s SSA-based optimizations aren’t all that useful when passed IR that makes direct use of stack slots. We want to maximize our use of SSA variables, whenever possible, to make future optimization passes as effective as possible.

This is where mem2reg comes in:

This file (optimization pass) promotes memory references to be register references. It promotes alloca instructions which only have loads and stores as uses. An alloca is transformed by using dominator frontiers to place phi nodes, then traversing the function in depth-first order to rewrite loads and stores as appropriate. This is just the standard SSA construction algorithm to construct “pruned” SSA form.

(Parenthetical mine.)

mem2reg gets run at -O1 and higher, so let’s do exactly that:

```llvm
define dso_local i32 @helpful_open(i8* nocapture readonly %fname) local_unnamed_addr #0 {
entry:
  %call = call i32 @access(i8* %fname, i32 0) #4
  %tobool.not = icmp eq i32 %call, 0
  %spec.select = select i1 %tobool.not, i32 66, i32 2
  %call1 = call i32 (i8*, i32, ...) @open(i8* %fname, i32 %spec.select, i32 420) #4, !dbg !22
  ret i32 %call1, !dbg !23
}
```

Foiled again! Our stack slots are gone thanks to mem2reg, but LLVM has actually optimized too far: it figured out that our flags value is wholly dependent on the return value of our access call and erased the conditional entirely.

Instead of a φ node, we got this select:

```llvm
%spec.select = select i1 %tobool.not, i32 66, i32 2
```

which the LLVM Language Reference describes concisely:

The ‘select’ instruction is used to choose one value based on a condition, without IR-level branching.

So we need a better example. Let’s do something that LLVM can’t trivially optimize into a select (or sequence of selects), like adding an else if with a function that we’ve only provided the declaration for:

```c
size_t filesize(char *);

int helpful_open(char *fname) {
  int flags = O_RDWR;

  if (!access(fname, F_OK)) {
    flags |= O_CREAT;
  } else if (filesize(fname) > 0) {
    flags |= O_TRUNC;
  }

  int fd = open(fname, flags, 0644);

  return fd;
}
```

```llvm
define dso_local i32 @helpful_open(i8* %fname) local_unnamed_addr #0 {
entry:
  %call = call i32 @access(i8* %fname, i32 0) #5
  %tobool.not = icmp eq i32 %call, 0
  br i1 %tobool.not, label %if.end4, label %if.else

if.else:                                          ; preds = %entry
  %call1 = call i64 @filesize(i8* %fname) #5
  %cmp.not = icmp eq i64 %call1, 0
  %spec.select = select i1 %cmp.not, i32 2, i32 514
  br label %if.end4

if.end4:                                          ; preds = %if.else, %entry
  %flags.0 = phi i32 [ 66, %entry ], [ %spec.select, %if.else ]
  %call5 = call i32 (i8*, i32, ...) @open(i8* %fname, i32 %flags.0, i32 420) #5
  ret i32 %call5
}
```

That’s more like it! Here’s our magical φ:

```llvm
%flags.0 = phi i32 [ 66, %entry ], [ %spec.select, %if.else ]
```

LLVM’s phi is slightly more complicated than the φ(flags_0, flags_1) that I made up before, but not by much: it takes a list of pairs (two, in this case), with each pair containing a possible value and that value’s originating basic block (which, by construction, is always a predecessor block in the context of the φ node).

The Language Reference backs us up:

The type of the incoming values is specified with the first type field. After this, the ‘phi’ instruction takes a list of pairs as arguments, with one pair for each predecessor basic block of the current block. Only values of first class type may be used as the value arguments to the PHI node. Only labels may be used as the label arguments. There must be no non-phi instructions between the start of a basic block and the PHI instructions: i.e. PHI instructions must be first in a basic block.

Observe, too, that LLVM is still being clever: one of our φ choices is a computed select ( %spec.select ), so LLVM still managed to partially erase the original control flow.

So that’s cool. But there’s a piece of control flow that we’ve conspicuously ignored.

What about loops?

```c
int do_math(int count, int base) {
  for (int i = 0; i < count; i++) {
    base += base;
  }

  return base;
}
```

```llvm
define dso_local i32 @do_math(i32 %count, i32 %base) local_unnamed_addr #0 {
entry:
  %cmp5 = icmp sgt i32 %count, 0
  br i1 %cmp5, label %for.body, label %for.cond.cleanup

for.cond.cleanup:                                 ; preds = %for.body, %entry
  %base.addr.0.lcssa = phi i32 [ %base, %entry ], [ %add, %for.body ]
  ret i32 %base.addr.0.lcssa

for.body:                                         ; preds = %entry, %for.body
  %i.07 = phi i32 [ %inc, %for.body ], [ 0, %entry ]
  %base.addr.06 = phi i32 [ %add, %for.body ], [ %base, %entry ]
  %add = shl nsw i32 %base.addr.06, 1
  %inc = add nuw nsw i32 %i.07, 1
  %exitcond.not = icmp eq i32 %inc, %count
  br i1 %exitcond.not, label %for.cond.cleanup, label %for.body, !llvm.loop !26
}
```

Not one, not two, but three φs! In order of appearance:

1. Because we supply the loop bounds via count, LLVM has no way to ensure that we actually enter the loop body. Consequently, our very first φ selects between the initial %base and %add. LLVM’s phi syntax helpfully tells us that %base comes from the entry block and %add from the loop, just as we expect. I have no idea why LLVM selected such a hideous name for the resulting value (%base.addr.0.lcssa).

2. Our index variable is initialized once and then updated with each for iteration, so it also needs a φ. Our selections here are %inc (which each body computes from %i.07) and the 0 literal (i.e., our initialization value).

3. Finally, the heart of our loop body: we need to get base, where base is either the initial base value (%base) or the value computed as part of the prior loop (%add). One last φ gets us there.

The rest of the IR is bookkeeping: we need separate SSA variables to compute the addition ( %add ), increment ( %inc ), and exit check ( %exitcond.not ) with each loop iteration.
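To convince myself that the three φs really do the right thing, here’s a rough Python sketch that steps through the blocks of the do_math IR by hand, resolving each φ from the block we just came from. The function name do_math_ssa and the dictionary-based environment are my own scaffolding, and Python integers don’t wrap like i32, so overflow behavior is not modeled.

```python
def do_math_ssa(count, base):
    """Walk the entry / for.body / for.cond.cleanup blocks of do_math."""
    env = {"count": count, "base": base}
    prev, block = None, "entry"
    while True:
        if block == "entry":
            nxt = "for.body" if env["count"] > 0 else "for.cond.cleanup"
        elif block == "for.body":
            # Both phis read values from the edge we arrived on.
            i = 0 if prev == "entry" else env["inc"]
            b = env["base"] if prev == "entry" else env["add"]
            env["i.07"], env["base.addr.06"] = i, b
            env["add"] = env["base.addr.06"] << 1   # shl by 1
            env["inc"] = env["i.07"] + 1
            at_end = env["inc"] == env["count"]
            nxt = "for.cond.cleanup" if at_end else "for.body"
        else:  # for.cond.cleanup
            # %base.addr.0.lcssa = phi [ %base, %entry ], [ %add, %for.body ]
            return env["base"] if prev == "entry" else env["add"]
        prev, block = block, nxt


print(do_math_ssa(3, 1))
```

Each φ is just a question of “which edge did we arrive on?”, which is exactly the information the [ value, %block ] pairs encode.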

So now we know what an SSA form is, and how LLVM represents them[6]. Why should we care?

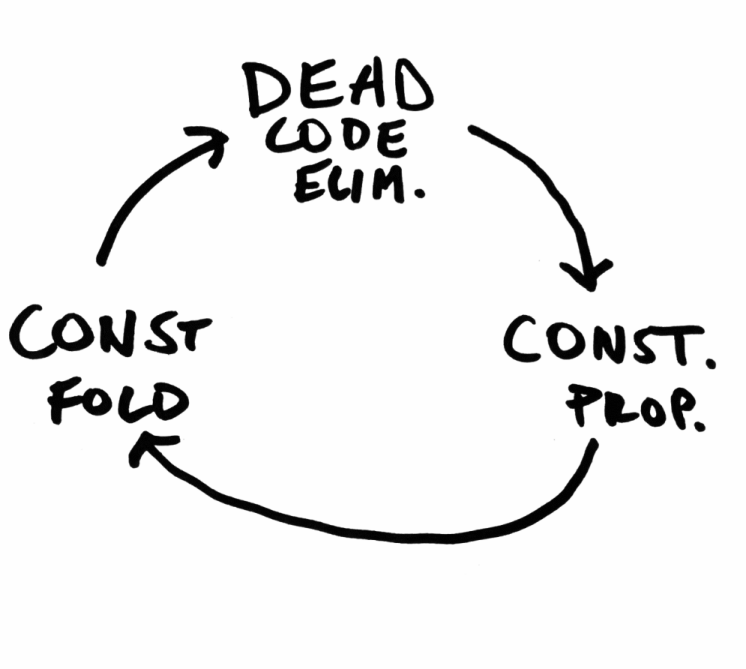

As I briefly alluded to early in the post, it comes down to optimization potential: the SSA forms of programs are particularly suited to a number of effective optimizations.

Let’s go through a select few of them.

Dead code elimination

One of the simplest things that an optimizing compiler can do is remove code that cannot possibly be executed. This makes the resulting binary smaller (and usually faster, since more of it can fit in the instruction cache).

“Dead” code falls into several categories[7], but a common one is assignments that cannot affect program behavior, like redundant initialization:

```c
int main(void) {
  int x = 100;

  if (rand() % 2) {
    x = 200;
  } else if (rand() % 2) {
    x = 300;
  } else {
    x = 400;
  }

  return x;
}
```

Without an SSA form, an optimizing compiler would need to check whether any use of x reaches its original definition (x = 100). Tedious. In SSA form, the impossibility of that is obvious:

```c
int main(void) {
  int x_0 = 100;

  // Just ignore the scoping. Computers aren't real life.
  if (rand() % 2) {
    int x_1 = 200;
  } else if (rand() % 2) {
    int x_2 = 300;
  } else {
    int x_3 = 400;
  }

  return φ(x_1, x_2, x_3);
}
```

And sure enough, LLVM eliminates the initial assignment of 100 entirely:

```llvm
define dso_local i32 @main() local_unnamed_addr #0 {
entry:
  %call = call i32 @rand() #3
  %0 = and i32 %call, 1
  %tobool.not = icmp eq i32 %0, 0
  br i1 %tobool.not, label %if.else, label %if.end6

if.else:                                          ; preds = %entry
  %call1 = call i32 @rand() #3
  %1 = and i32 %call1, 1
  %tobool3.not = icmp eq i32 %1, 0
  %. = select i1 %tobool3.not, i32 400, i32 300
  br label %if.end6

if.end6:                                          ; preds = %if.else, %entry
  %x.0 = phi i32 [ 200, %entry ], [ %., %if.else ]
  ret i32 %x.0
}
```
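Here’s a small Python sketch of why this is easy on SSA: since every name has exactly one definition, liveness is just reachability from the values the function returns. This is a toy mark-and-sweep, not LLVM’s actual pass. The tuple encoding and the name eliminate_dead_code are my own, and it assumes every instruction is free of side effects (real passes must keep calls like rand()).

```python
def eliminate_dead_code(definitions, roots):
    """Keep only the SSA definitions reachable from the root values.

    definitions: dict of SSA name -> (opcode, args); args are ints or names.
    roots: names whose values escape the function (e.g. the return value).
    """
    live = set()
    worklist = list(roots)
    while worklist:
        name = worklist.pop()
        if name in live or name not in definitions:
            continue
        live.add(name)
        _, args = definitions[name]
        worklist.extend(a for a in args if isinstance(a, str))
    return {n: d for n, d in definitions.items() if n in live}


# The SSA form of main() from above, with the branches elided.
definitions = {
    "x_0": ("const", [100]),
    "x_1": ("const", [200]),
    "x_2": ("const", [300]),
    "x_3": ("const", [400]),
    "x_4": ("phi", ["x_1", "x_2", "x_3"]),
}
kept = eliminate_dead_code(definitions, roots=["x_4"])
print(sorted(kept))
```

Nothing reachable from the returned φ ever mentions x_0, so it disappears without any reaching-definition analysis.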

Constant propagation

Compilers can also optimize a program by replacing uses of a constant variable with the constant value itself. Let’s take a look at another blob of C:

```c
int some_math(int x) {
  int y = 7;
  int z = 10;
  int a;

  if (rand() % 2) {
    a = y + z;
  } else if (rand() % 2) {
    a = y + z;
  } else {
    a = y - z;
  }

  return x + a;
}
```

As humans, we can see that y and z are trivially assigned and never modified[8]. For the compiler, however, this is a variant of the reaching definition problem from above: before it can replace y and z with 7 and 10 respectively, it needs to make sure that y and z are never assigned a different value.

Let’s do our SSA reduction:

```c
int some_math(int x) {
  int y_0 = 7;
  int z_0 = 10;
  int a_0;

  if (rand() % 2) {
    int a_1 = y_0 + z_0;
  } else if (rand() % 2) {
    int a_2 = y_0 + z_0;
  } else {
    int a_3 = y_0 - z_0;
  }

  int a_4 = φ(a_1, a_2, a_3);

  return x + a_4;
}
```

This is virtually identical to our original form, but with one critical difference: the compiler can now see that every load of y and z is the original assignment. In other words, they’re all safe to replace!

```c
int some_math(int x) {
  int y = 7;
  int z = 10;
  int a_0;

  if (rand() % 2) {
    int a_1 = 7 + 10;
  } else if (rand() % 2) {
    int a_2 = 7 + 10;
  } else {
    int a_3 = 7 - 10;
  }

  int a_4 = φ(a_1, a_2, a_3);

  return x + a_4;
}
```

So we’ve gotten rid of a few potential register operations, which is nice. But here’s the really critical part: we’ve set ourselves up for several other optimizations:

Now that we’ve propagated some of our constants, we can do some trivial constant folding: 7 + 10 becomes 17, and so forth.

In SSA form, it’s trivial to observe that only x and a_{1..4} can affect the program’s behavior. So we can apply our dead code elimination from above and delete y and z entirely!

This is the real magic of an optimizing compiler: each individual optimization is simple and largely independent, but together they produce a virtuous cycle that can be repeated until gains diminish.
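As a rough illustration of how little machinery the first two steps need on SSA, here’s a Python sketch that propagates and folds constants in a single forward pass. The instruction encoding and the name propagate_constants are made up for this post, and the single pass assumes that every definition appears before its uses (true here, since there are no loops).

```python
import operator

OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul}


def propagate_constants(instructions):
    """instructions: list of (dest, op, args); args are ints or SSA names."""
    constants = {}  # SSA name -> known constant value
    out = []
    for dest, op, args in instructions:
        # Propagation: swap names with known values for the values themselves.
        args = [constants.get(a, a) if isinstance(a, str) else a for a in args]
        if op == "const":
            constants[dest] = args[0]
        elif op in OPS and all(isinstance(a, int) for a in args):
            # Folding: every operand is known, so compute the result now.
            constants[dest] = OPS[op](*args)
            op, args = "const", [constants[dest]]
        out.append((dest, op, args))
    return out


# some_math in SSA form, with the control flow elided.
program = [
    ("y_0", "const", [7]),
    ("z_0", "const", [10]),
    ("a_1", "+", ["y_0", "z_0"]),
    ("a_2", "+", ["y_0", "z_0"]),
    ("a_3", "-", ["y_0", "z_0"]),
    ("a_4", "phi", ["a_1", "a_2", "a_3"]),
    ("ret", "+", ["x", "a_4"]),
]
folded = propagate_constants(program)
for instruction in folded:
    print(instruction)
```

After this pass nothing refers to y_0 or z_0 anymore, which is what lets dead code elimination delete them on the next round.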

Register allocation

Register allocation (alternatively: register scheduling) is less of an optimization itself, and more of an unavoidable problem in compiler engineering: it’s fun to pretend to have access to an infinite number of addressable variables, but the compiler eventually insists that we boil our operations down to a small, fixed set of CPU registers.

The constraints and complexities of register allocation vary by architecture: x86 (prior to AMD64) is notoriously starved for registers[9] (only 8 full general purpose registers, of which 6 might be usable within a function’s scope[10]), while RISC architectures typically employ larger numbers of registers to compensate for the lack of register-memory operations.

Just as above, reductions to SSA form have both indirect and direct advantages for the register allocator:

Indirectly: Elimination of redundant loads and stores reduces the overall pressure on the register allocator, allowing it to avoid expensive spills (i.e., having to temporarily transfer a live register to main memory to accommodate another instruction).

Directly: Compilers have historically lowered φs into copies before register allocation, meaning that register allocators traditionally haven’t benefited from the SSA form itself[11]. There is, however, (semi-)recent research on direct application of SSA forms to both linear and coloring allocators[12][13].

A concrete example: modern JavaScript engines use JITs to accelerate program evaluation. These JITs frequently use linear register allocators for their acceptable tradeoff between register selection speed (linear, as the name suggests) and acceptable register scheduling. Converting out of SSA form is a time-consuming operation of its own, so linear allocation on the SSA representation itself is appealing in JITs and other contexts where compile time is part of execution time.
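For a feel of what a linear allocator does, here’s a Python sketch of the classic linear-scan idea over live intervals: walk the intervals in order of start point, hand out free registers, and spill whichever interval ends furthest away when none are left. This is the traditional non-SSA formulation, not the SSA-based variants from the papers cited above, and the interval data and the name linear_scan are invented for the example.

```python
def linear_scan(intervals, num_registers):
    """intervals: dict of name -> (start, end). Returns (assignment, spilled)."""
    free = list(range(num_registers))
    active = []  # (end, name) pairs currently holding a register, sorted by end
    assignment, spilled = {}, []
    for name, (start, end) in sorted(intervals.items(), key=lambda kv: kv[1][0]):
        # Expire intervals that ended before this one starts.
        while active and active[0][0] < start:
            _, old = active.pop(0)
            free.append(assignment[old])
        if free:
            assignment[name] = free.pop(0)
            active.append((end, name))
        elif active and active[-1][0] > end:
            # Spill the active interval that lives longest; reuse its register.
            _, victim = active.pop()
            assignment[name] = assignment.pop(victim)
            spilled.append(victim)
            active.append((end, name))
        else:
            spilled.append(name)
        active.sort()
    return assignment, spilled


intervals = {"a": (0, 10), "b": (1, 3), "c": (2, 8), "d": (4, 6)}
assignment, spilled = linear_scan(intervals, num_registers=2)
print(assignment, spilled)
```

With two registers, the long-lived a is the one that gets spilled, and d can reuse b’s register once b has expired.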

There are many things about SSA that I didn’t cover in this post: dominance frontiers, tradeoffs between “pruned” and less optimal SSA forms, and feedback mechanisms between the SSA form of a program and the compiler’s decision to cease optimizing, among others. Each of these could be its own blog post, and maybe will be in the future!

[1] In the sense that each task is conceptually isolated and has well-defined inputs and outputs. Individual compilers have some flexibility with respect to whether they combine or further split the tasks.

[2] The distinction between an AST and an intermediate representation is hazy: Rust converts their AST to HIR early in the compilation process, and languages can be designed to have ASTs that are amenable to analyses that would otherwise be best on an IR.

[3] This can be broken up into lexical validation (e.g. use of an undeclared identifier) and semantic validation (e.g. incorrect initialization of a type).

[4] This is what LLVM does: LLVM IR is lowered to MIR (not to be confused with Rust’s MIR), which is subsequently lowered to machine code.

[5] Not because they can’t: the SSA form of a program can be executed by evaluating φ with concrete control flow.

[6] We haven’t talked at all about minimal or pruned SSAs, and I don’t plan on doing so in this post. The TL;DR of them: naïve SSA form generation can lead to lots of unnecessary φ nodes, impeding analyses. LLVM (and GCC, and anything else that uses SSAs probably) will attempt to translate any initial SSA form into one with a minimally viable number of φs. For LLVM, this is tied directly to the rest of mem2reg.

[7] Including removing code that has undefined behavior in it, since “doesn’t run at all” is a valid consequence of invoking UB.

[8] And are also function scoped, meaning that another translation unit can’t address them.

[9] x86 makes up for this by not being a load-store architecture: many instructions can pay the price of a memory round-trip in exchange for saving a register.

[10] Assuming that %esp and %ebp are being used by the compiler to manage the function’s frame.

[11] LLVM, for example, lowers all φs as one of its very first preparations for register allocation. See this 2009 LLVM Developers’ Meeting talk.

[12] Wimmer 2010a: “Linear Scan Register Allocation on SSA Form” (PDF)

[13] Hack 2005: “Towards Register Allocation for Programs in SSA-form” (PDF)


13.3 Static Single Assignment

Most of the tree optimizers rely on the data flow information provided by the Static Single Assignment (SSA) form. We implement the SSA form as described in R. Cytron, J. Ferrante, B. Rosen, M. Wegman, and K. Zadeck. Efficiently Computing Static Single Assignment Form and the Control Dependence Graph. ACM Transactions on Programming Languages and Systems, 13(4):451-490, October 1991.

The SSA form is based on the premise that program variables are assigned in exactly one location in the program. Multiple assignments to the same variable create new versions of that variable. Naturally, actual programs are seldom in SSA form initially because variables tend to be assigned multiple times. The compiler modifies the program representation so that every time a variable is assigned in the code, a new version of the variable is created. Different versions of the same variable are distinguished by subscripting the variable name with its version number. Variables used in the right-hand side of expressions are renamed so that their version number matches that of the most recent assignment.

We represent variable versions using SSA_NAME nodes. The renaming process in tree-ssa.cc wraps every real and virtual operand with an SSA_NAME node which contains the version number and the statement that created the SSA_NAME. Only definitions and virtual definitions may create new SSA_NAME nodes.

Sometimes, flow of control makes it impossible to determine the most recent version of a variable. In these cases, the compiler inserts an artificial definition for that variable called a PHI function or PHI node. This new definition merges all the incoming versions of the variable to create a new name for it. For instance,

Since it is not possible to determine which of the three branches will be taken at runtime, we don’t know which of a_1, a_2 or a_3 to use at the return statement. So, the SSA renamer creates a new version a_4 which is assigned the result of “merging” a_1, a_2 and a_3. Hence, PHI nodes mean “one of these operands. I don’t know which”.

The following functions can be used to examine PHI nodes:

Returns the SSA_NAME created by PHI node phi (i.e., phi’s LHS).

Returns the number of arguments in phi. This number is exactly the number of incoming edges to the basic block holding phi.

Returns the i-th argument of phi.

Returns the incoming edge for the i-th argument of phi.

Returns the SSA_NAME for the i-th argument of phi.

- Preserving the SSA form

- Examining SSA_NAME nodes

- Walking the dominator tree

13.3.1 Preserving the SSA form

Some optimization passes make changes to the function that invalidate the SSA property. This can happen when a pass has added new symbols or changed the program so that variables that were previously aliased aren’t anymore. Whenever something like this happens, the affected symbols must be renamed into SSA form again. Transformations that emit new code or replicate existing statements will also need to update the SSA form.

Since GCC implements two different SSA forms for register and virtual variables, keeping the SSA form up to date depends on whether you are updating register or virtual names. In both cases, the general idea behind incremental SSA updates is similar: when new SSA names are created, they typically are meant to replace other existing names in the program.

For instance, given the following code:

Suppose that we insert new names x_10 and x_11 (lines 4 and 8).

We want to replace all the uses of x_1 with the new definitions of x_10 and x_11. Note that the only uses that should be replaced are those at lines 5, 9 and 11. Also, the use of x_7 at line 9 should not be replaced (this is why we cannot just mark symbol x for renaming).

Additionally, we may need to insert a PHI node at line 11 because that is a merge point for x_10 and x_11. So the use of x_1 at line 11 will be replaced with the new PHI node. The insertion of PHI nodes is optional. They are not strictly necessary to preserve the SSA form, and depending on what the caller inserted, they may not even be useful for the optimizers.

Updating the SSA form is a two step process. First, the pass has to identify which names need to be updated and/or which symbols need to be renamed into SSA form for the first time. When new names are introduced to replace existing names in the program, the mapping between the old and the new names is registered by calling register_new_name_mapping (note that if your pass creates new code by duplicating basic blocks, the call to tree_duplicate_bb will set up the necessary mappings automatically).

After the replacement mappings have been registered and new symbols marked for renaming, a call to update_ssa makes the registered changes. This can be done with an explicit call or by creating TODO flags in the tree_opt_pass structure for your pass. There are several TODO flags that control the behavior of update_ssa:

- TODO_update_ssa. Update the SSA form inserting PHI nodes for newly exposed symbols and virtual names marked for updating. When updating real names, only insert PHI nodes for a real name O_j in blocks reached by all the new and old definitions for O_j. If the iterated dominance frontier for O_j is not pruned, we may end up inserting PHI nodes in blocks that have one or more edges with no incoming definition for O_j. This would lead to uninitialized warnings for O_j’s symbol.

- TODO_update_ssa_no_phi. Update the SSA form without inserting any new PHI nodes at all. This is used by passes that have either inserted all the PHI nodes themselves or passes that need only to patch use-def and def-def chains for virtuals (e.g., DCE).

WARNING: If you need to use this flag, chances are that your pass may be doing something wrong. Inserting PHI nodes for an old name where not all edges carry a new replacement may lead to silent codegen errors or spurious uninitialized warnings.

- TODO_update_ssa_only_virtuals. Passes that update the SSA form on their own may want to delegate the updating of virtual names to the generic updater. Since FUD chains are easier to maintain, this simplifies the work they need to do. NOTE: If this flag is used, any OLD->NEW mappings for real names are explicitly destroyed and only the symbols marked for renaming are processed.

13.3.2 Examining SSA_NAME nodes

The following macros can be used to examine SSA_NAME nodes:

Returns the statement s that creates the SSA_NAME var. If s is an empty statement (i.e., IS_EMPTY_STMT (s) returns true), it means that the first reference to this variable is a USE or a VUSE.

Returns the version number of the SSA_NAME object var.

13.3.3 Walking the dominator tree

This function walks the dominator tree for the current CFG calling a set of callback functions defined in struct dom_walk_data in domwalk.h. The callback functions you need to define give you hooks to execute custom code at various points during traversal:

1. Once to initialize any local data needed while processing bb and its children. This local data is pushed into an internal stack which is automatically pushed and popped as the walker traverses the dominator tree.

2. Once before traversing all the statements in the bb.

3. Once for every statement inside bb.

4. Once after traversing all the statements and before recursing into bb’s dominator children.

5. It then recurses into all the dominator children of bb.

6. After recursing into all the dominator children of bb it can, optionally, traverse every statement in bb again (i.e., repeating steps 2 and 3).

7. Once after walking the statements in bb and bb’s dominator children. At this stage, the block local data stack is popped.

Lesson 5: Global Analysis & SSA

- global analysis & optimization

- static single assignment

- SSA slides from Todd Mowry at CMU: another presentation of the pseudocode for various algorithms herein

- Revisiting Out-of-SSA Translation for Correctness, Code Quality, and Efficiency by Boissinot et al., on more sophisticated ways to translate out of SSA form

- tasks due October 7

Lots of definitions!

- Reminders: Successors & predecessors. Paths in CFGs.

- A dominates B iff all paths from the entry to B include A .

- The dominator tree is a convenient data structure for storing the dominance relationships in an entire function. The recursive children of a given node in a tree are the nodes that that node dominates.

- A strictly dominates B iff A dominates B and A ≠ B . (Dominance is reflexive, so "strict" dominance just takes that part away.)

- A immediately dominates B iff A strictly dominates B but A does not strictly dominate any other node that strictly dominates B . (In which case A is B 's direct parent in the dominator tree.)

- A dominance frontier is the set of nodes that are just "one edge away" from being dominated by a given node. Put differently, A 's dominance frontier contains B iff A does not strictly dominate B , but A does dominate some predecessor of B .

- Post-dominance is the reverse of dominance. A post-dominates B iff all paths from B to the exit include A . (You can extend the strict version, the immediate version, trees, etc. to post-dominance.)
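
The dominance frontier definition above translates almost directly into code. The following is a minimal Python sketch, assuming the dominator sets are already known; the diamond-shaped CFG, the block names, and the helper name `dominance_frontier` are made up for illustration.

```python
# Hypothetical diamond-shaped CFG: entry -> {left, right} -> join.
succs = {
    "entry": ["left", "right"],
    "left": ["join"],
    "right": ["join"],
    "join": [],
}

# dom[b] is the set of blocks that dominate b (written out by hand here).
dom = {
    "entry": {"entry"},
    "left": {"entry", "left"},
    "right": {"entry", "right"},
    "join": {"entry", "join"},
}


def dominance_frontier(succs, dom):
    """Map each block A to the set of blocks in A's dominance frontier."""
    preds = {b: [] for b in succs}
    for b, outs in succs.items():
        for s in outs:
            preds[s].append(b)

    frontier = {a: set() for a in succs}
    for a in succs:
        for b in succs:
            strictly_dominates = a in dom[b] and a != b
            dominates_a_pred = any(a in dom[p] for p in preds[b])
            # B is in A's frontier iff A dominates some predecessor of B
            # but does not strictly dominate B itself.
            if dominates_a_pred and not strictly_dominates:
                frontier[a].add(b)
    return frontier


frontier = dominance_frontier(succs, dom)
```

Here `left` and `right` each have `join` in their frontier, while `entry` has an empty frontier because it strictly dominates every other block.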

An algorithm for finding dominators:

The dom relation will, in the end, map each block to its set of dominators. We initialize it as the "complete" relation, i.e., mapping every block to the set of all blocks. The loop pares down the sets by iterating to convergence.

The running time is O(n²) in the worst case. But there's a trick: if you iterate over the CFG in reverse post-order , and the CFG is well behaved (reducible), it runs in linear time—the outer loop runs a constant number of times.
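
One way to write this iteration in Python is sketched below. It is not necessarily the formulation the lesson used: the function name `find_dominators`, the successor-map encoding of the CFG, and the example blocks are assumptions, and the sketch assumes every block is reachable from the entry. For brevity it visits blocks in dictionary order rather than reverse post-order.

```python
def find_dominators(succs, entry):
    """Return dom, mapping each block to the set of blocks that dominate it."""
    preds = {b: [] for b in succs}
    for b, outs in succs.items():
        for s in outs:
            preds[s].append(b)

    # Start from the "complete" relation: every block maps to all blocks.
    dom = {b: set(succs) for b in succs}
    dom[entry] = {entry}

    changed = True
    while changed:
        changed = False
        for b in succs:
            if b == entry:
                continue
            # A block's dominators are itself plus whatever dominates
            # all of its predecessors.
            pred_doms = [dom[p] for p in preds[b]]
            new = set.intersection(*pred_doms) if pred_doms else set()
            new = new | {b}
            if new != dom[b]:
                dom[b] = new
                changed = True
    return dom


# Hypothetical CFG with one loop: entry -> header <-> body, header -> exit.
loop_cfg = {
    "entry": ["header"],
    "header": ["body", "exit"],
    "body": ["header"],
    "exit": [],
}
dom = find_dominators(loop_cfg, "entry")
```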

Natural Loops

Some things about loops:

- Natural loops are strongly connected components in the CFG with a single entry.

- Natural loops are formed around backedges , which are edges from A to B where B dominates A .

- A natural loop is the smallest set of vertices L including A and B such that, for every v in L , either all the predecessors of v are in L or v = B .

- A language that only has for , while , if , break , continue , etc. can only generate reducible CFGs. You need goto or something to generate irreducible CFGs.
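
Given a backedge, the natural loop can be collected by walking predecessors backwards from the tail until the header is reached. This is a small sketch under the assumption that the backedge has already been identified (that is, the header dominates the tail); the name `natural_loop` and the example CFG are hypothetical.

```python
def natural_loop(succs, tail, header):
    """Blocks of the natural loop for the backedge tail -> header."""
    preds = {b: [] for b in succs}
    for b, outs in succs.items():
        for s in outs:
            preds[s].append(b)

    loop = {header, tail}
    # The header is already in the set, so the walk never goes past it.
    worklist = [tail] if tail != header else []
    while worklist:
        b = worklist.pop()
        for p in preds[b]:
            if p not in loop:
                loop.add(p)
                worklist.append(p)
    return loop


# Hypothetical CFG: entry -> header -> body -> latch -> header, header -> exit.
cfg = {
    "entry": ["header"],
    "header": ["body", "exit"],
    "body": ["latch"],
    "latch": ["header"],
    "exit": [],
}
loop_blocks = natural_loop(cfg, "latch", "header")
```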

Loop-Invariant Code Motion (LICM)

And finally, loop-invariant code motion (LICM) is an optimization that works on natural loops. It moves code from inside a loop to before the loop, if the computation always does the same thing on every iteration of the loop.

A loop's preheader is its header's unique predecessor. LICM moves code to the preheader. But while natural loops need to have a unique header, the header does not necessarily have a unique predecessor. So it's often convenient to invent an empty preheader block that jumps directly to the header, and then move all the in-edges to the header to point there instead.

LICM needs two ingredients: identifying loop-invariant instructions in the loop body, and deciding when it's safe to move one from the body to the preheader.

To identify loop-invariant instructions:

(This determination requires that you already calculated reaching definitions! Presumably using data flow.)
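
One common formulation, sketched here in Python, marks an instruction as loop-invariant when, for each argument, either every reaching definition lies outside the loop or there is exactly one reaching definition and it is already marked loop-invariant, iterating until nothing changes. The tuple encoding of instructions, the shape of the `reaching` table, and the `"outside"` marker are simplifying assumptions made for this example.

```python
def loop_invariant(instrs, reaching):
    """Return the set of indices of loop-invariant instructions.

    instrs:   list of (dest, op, args) tuples for the instructions in the loop.
    reaching: reaching[i][x] is the set of definitions of x that reach
              instruction i; an int is the index of a definition inside the
              loop, and the string "outside" stands for any definition
              outside the loop.
    """
    invariant = set()
    changed = True
    while changed:
        changed = False
        for i, (dest, op, args) in enumerate(instrs):
            if i in invariant:
                continue

            def arg_ok(x):
                defs = reaching[i][x]
                if all(d == "outside" for d in defs):
                    return True
                return len(defs) == 1 and next(iter(defs)) in invariant

            if all(arg_ok(x) for x in args):
                invariant.add(i)
                changed = True
    return invariant


# Hypothetical loop body; x, y, and one are defined before the loop.
body = [
    ("n", "const", []),           # 0: no arguments at all
    ("a", "add", ["x", "y"]),     # 1: both arguments come from outside
    ("b", "mul", ["a", "n"]),     # 2: depends only on instructions 0 and 1
    ("i", "add", ["i", "one"]),   # 3: i is redefined on every iteration
]
reaching = [
    {},
    {"x": {"outside"}, "y": {"outside"}},
    {"a": {1}, "n": {0}},
    {"i": {"outside", 3}, "one": {"outside"}},
]
invariant = loop_invariant(body, reaching)
```

The sketch only identifies candidates; whether moving them is safe is a separate question.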

It's safe to move a loop-invariant instruction to the preheader iff:

- The definition dominates all of its uses, and

- No other definitions of the same variable exist in the loop, and

- The instruction dominates all loop exits.

The last criterion is somewhat tricky: it ensures that the computation would have been computed eventually anyway, so it's safe to just do it earlier. But it's not true of loops that may execute zero times, which, when you think about it, rules out most for loops! It's possible to relax this condition if:

- The assigned-to variable is dead after the loop, and

- The instruction can't have side effects, including exceptions—generally ruling out division because it might divide by zero. (A thing that you generally need to be careful of in such speculative optimizations that do computations that might not actually be necessary.)

Static Single Assignment (SSA)

You have undoubtedly noticed by now that many of the annoying problems in implementing analyses & optimizations stem from variable name conflicts. Wouldn't it be nice if every assignment in a program used a unique variable name? Of course, people don't write programs that way, so we're out of luck. Right?

Wrong! Many compilers convert programs into static single assignment (SSA) form, which does exactly what it says: it ensures that, globally, every variable has exactly one static assignment location. (Of course, that statement might be executed multiple times, which is why it's not dynamic single assignment.) In Bril terms, we convert a program like this:

Into a program like this, by renaming all the variables:

Of course, things will get a little more complicated when there is control flow. And because real machines are not SSA, using separate variables (i.e., memory locations and registers) for everything is bound to be inefficient. The idea in SSA is to convert general programs into SSA form, do all our optimization there, and then convert back to a standard mutating form before we generate backend code.
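
For straight-line code, the renaming is mechanical enough to sketch in a few lines of Python. The tuple encoding of instructions and the `name.N` versioning scheme are assumptions made for this example, not Bril's actual JSON format; any argument that has not been assigned yet (an input or a literal) is left untouched.

```python
def rename_straight_line(instrs):
    """Give every assignment in one basic block a fresh name.

    instrs is a list of (dest, op, args) tuples; dest is None for
    instructions such as print that assign nothing.
    """
    version = {}  # variable -> latest version number
    out = []
    for dest, op, args in instrs:
        # Uses refer to the most recent version of each variable.
        new_args = [f"{a}.{version[a]}" if a in version else a for a in args]
        if dest is not None:
            version[dest] = version.get(dest, 0) + 1
            dest = f"{dest}.{version[dest]}"
        out.append((dest, op, new_args))
    return out


# Hypothetical program that assigns a and b twice each.
program = [
    ("a", "const", ["4"]),
    ("b", "const", ["2"]),
    ("a", "add", ["a", "b"]),
    ("b", "add", ["a", "b"]),
    (None, "print", ["b"]),
]
renamed = rename_straight_line(program)
```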

Just renaming assignments willy-nilly will quickly run into problems. Consider this program:

If we start renaming all the occurrences of a , everything goes fine until we try to write that last print a . Which "version" of a should it use?

To match the expressiveness of unrestricted programs, SSA adds a new kind of instruction: a ϕ-node . ϕ-nodes are flow-sensitive copy instructions: they get a value from one of several variables, depending on which incoming CFG edge was most recently taken to get to them.

In Bril, a ϕ-node appears as a phi instruction:

The phi instruction chooses between any number of variables, and it picks between them based on labels. If the program most recently executed a basic block with the given label, then the phi instruction takes its value from the corresponding variable.
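
The selection rule can be modeled in a few lines of Python. This is only an illustration of the semantics, not how the Bril interpreter is implemented; the function name `eval_phi` and the pair-list encoding of the phi's arguments are hypothetical.

```python
def eval_phi(phi_args, last_label, env):
    """Pick the phi's value based on the block that just finished executing.

    phi_args:   list of (label, variable) pairs from the phi instruction.
    last_label: label of the most recently executed basic block.
    env:        current values of all variables.
    """
    for label, var in phi_args:
        if label == last_label:
            return env[var]
    raise ValueError(f"phi has no entry for predecessor {last_label!r}")


# Hypothetical state: a.2 was set on the "left" path, a.3 on the "right" path.
env = {"a.2": 10, "a.3": 20}
phi_args = [("left", "a.2"), ("right", "a.3")]
from_left = eval_phi(phi_args, "left", env)
from_right = eval_phi(phi_args, "right", env)
```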

You can write the above program in SSA like this:

It can also be useful to see how ϕ-nodes crop up in loops.

(An aside: some recent SSA-form IRs, such as MLIR and Swift's IR , use an alternative to ϕ-nodes called basic block arguments . Instead of making ϕ-nodes look like weird instructions, these IRs bake the need for ϕ-like conditional copies into the structure of the CFG. Basic blocks have named parameters, and whenever you jump to a block, you must provide arguments for those parameters. With ϕ-nodes, a basic block enumerates all the possible sources for a given variable, one for each in-edge in the CFG; with basic block arguments, the sources are distributed to the "other end" of the CFG edge. Basic block arguments are a nice alternative for "SSA-native" IRs because they avoid messy problems that arise when needing to treat ϕ-nodes differently from every other kind of instruction.)

Bril in SSA

Bril has an SSA extension . It adds support for a phi instruction. Beyond that, SSA form is just a restriction on the normal expressiveness of Bril—if you solemnly promise never to assign statically to the same variable twice, you are writing "SSA Bril."

The reference interpreter has built-in support for phi , so you can execute your SSA-form Bril programs without fuss.

The SSA Philosophy

In addition to a language form, SSA is also a philosophy! It can fundamentally change the way you think about programs. In the SSA philosophy:

- definitions == variables

- instructions == values

- arguments == data flow graph edges

In LLVM, for example, instructions do not refer to argument variables by name: an argument is a pointer to the defining instruction.

Converting to SSA

To convert to SSA, we want to insert ϕ-nodes whenever there are distinct paths containing distinct definitions of a variable. We don't need ϕ-nodes in places that are dominated by a definition of the variable. So what's a way to know when control reachable from a definition is not dominated by that definition? The dominance frontier!

We do it in two steps. First, insert ϕ-nodes:
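
A minimal Python sketch of the insertion step follows. It assumes the dominance frontier has already been computed, and it only records which variables need a ϕ-node in which block rather than editing instructions; the names `place_phis`, `defs`, and `frontier`, and the diamond-shaped example, are assumptions.

```python
def place_phis(defs, frontier):
    """Return phis, mapping each block to the variables needing a phi there.

    defs:     variable -> set of blocks that assign it.
    frontier: block -> set of blocks in its dominance frontier.
    """
    phis = {b: set() for b in frontier}
    for v, def_blocks in defs.items():
        seen = set(def_blocks)
        worklist = list(def_blocks)
        while worklist:
            d = worklist.pop()
            for block in frontier[d]:
                phis[block].add(v)
                # The phi is itself a new definition of v, so the block
                # has to be processed as a defining block too.
                if block not in seen:
                    seen.add(block)
                    worklist.append(block)
    return phis


# Hypothetical diamond CFG: entry -> {left, right} -> join.
frontier = {"entry": set(), "left": {"join"}, "right": {"join"}, "join": set()}
defs = {"a": {"entry", "left", "right"}, "cond": {"entry"}}
phis = place_phis(defs, frontier)
```

In this example only `a` gets a ϕ-node, at `join`; `cond` is assigned once in `entry`, whose frontier is empty.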

Then, rename variables:
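
The renaming step is usually written as a recursive walk over the dominator tree that keeps a stack of current names per variable. The sketch below is one such version in Python, under several assumptions: instructions are dicts, ϕ-nodes have already been inserted with empty argument lists and a hypothetical `"var"` key recording the original variable, and the dominator-tree children are supplied by the caller. A ϕ-node simply gets no argument for a predecessor along which the variable is undefined.

```python
from collections import defaultdict


def rename(blocks, succs, dom_children, entry):
    """Rename variables in place so that every destination is unique.

    blocks:       label -> list of dicts with keys "dest" (or None), "op",
                  and "args"; a phi has op "phi", starts with empty "args",
                  and carries the original variable under "var".
    succs:        label -> list of successor labels.
    dom_children: label -> labels it immediately dominates.
    """
    stack = defaultdict(list)   # original name -> stack of current versions
    counter = defaultdict(int)  # original name -> versions created so far

    def fresh(v):
        counter[v] += 1
        name = f"{v}.{counter[v]}"
        stack[v].append(name)
        return name

    def visit(label):
        pushed = []
        for instr in blocks[label]:
            if instr["op"] != "phi":
                # Unassigned names (inputs, literals) are left alone.
                instr["args"] = [stack[a][-1] if stack[a] else a
                                 for a in instr["args"]]
            if instr["dest"] is not None:
                old = instr["dest"]
                instr["dest"] = fresh(old)
                pushed.append(old)
        # Tell each successor's phis which version arrives from this block.
        for s in succs[label]:
            for instr in blocks[s]:
                if instr["op"] == "phi" and stack[instr["var"]]:
                    instr["args"].append((label, stack[instr["var"]][-1]))
        for child in dom_children[label]:
            visit(child)
        # Undo this block's definitions before returning to the parent.
        for old in pushed:
            stack[old].pop()

    visit(entry)


# Hypothetical diamond CFG with a phi for a already placed in join.
blocks = {
    "entry": [{"dest": "a", "op": "const", "args": ["47"]}],
    "left": [{"dest": "a", "op": "add", "args": ["a", "a"]}],
    "right": [{"dest": "a", "op": "mul", "args": ["a", "a"]}],
    "join": [
        {"dest": "a", "op": "phi", "args": [], "var": "a"},
        {"dest": None, "op": "print", "args": ["a"]},
    ],
}
succs = {"entry": ["left", "right"], "left": ["join"], "right": ["join"], "join": []}
dom_children = {"entry": ["left", "right", "join"], "left": [], "right": [], "join": []}
rename(blocks, succs, dom_children, "entry")
```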

Converting from SSA

Eventually, we need to convert out of SSA form to generate efficient code for real machines that don't have phi -nodes and do have finite space for variable storage.

The basic algorithm is pretty straightforward. If you have a ϕ-node:

Then there must be assignments to x and y (recursively) preceding this statement in the CFG. The paths from x to the phi -containing block and from y to the same block must "converge" at that block. So insert code into the phi -containing block's immediate predecessors along each of those two paths: one that does v = id x and one that does v = id y . Then you can delete the phi instruction.
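
The basic approach can be sketched in Python as follows. The dict-based instruction encoding, the function name `remove_phis`, and the rule of placing each copy just before a trailing `jmp` or `br` are assumptions made for this example; it is the naive translation only and does not attempt to avoid redundant copies.

```python
def remove_phis(blocks):
    """Replace every phi with `id` copies at the end of each predecessor.

    blocks: label -> list of dicts with keys "dest", "op", "args"; a phi's
            args are (predecessor label, variable) pairs.
    """
    for label, instrs in list(blocks.items()):
        for instr in instrs:
            if instr["op"] != "phi":
                continue
            for pred, var in instr["args"]:
                copy = {"dest": instr["dest"], "op": "id", "args": [var]}
                body = blocks[pred]
                # Keep a trailing jump or branch as the last instruction.
                ends_in_jump = body and body[-1]["op"] in ("jmp", "br")
                at = len(body) - 1 if ends_in_jump else len(body)
                body.insert(at, copy)
        blocks[label] = [i for i in instrs if i["op"] != "phi"]


# Hypothetical SSA program: v is x when coming from left, y from right.
blocks = {
    "left": [
        {"dest": "x", "op": "const", "args": ["1"]},
        {"dest": None, "op": "jmp", "args": ["join"]},
    ],
    "right": [
        {"dest": "y", "op": "const", "args": ["2"]},
        {"dest": None, "op": "jmp", "args": ["join"]},
    ],
    "join": [
        {"dest": "v", "op": "phi", "args": [("left", "x"), ("right", "y")]},
        {"dest": None, "op": "print", "args": ["v"]},
    ],
}
remove_phis(blocks)
```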

This basic approach can introduce some redundant copying. (Take a look at the code it generates after you implement it!) Non-SSA copy propagation optimization can work well as a post-processing step. For a more extensive take on how to translate out of SSA efficiently, see “Revisiting Out-of-SSA Translation for Correctness, Code Quality, and Efficiency” by Boissinot et al.

- Find dominators for a function.

- Construct the dominance tree.

- Compute the dominance frontier.

- One thing to watch out for: a tricky part of the translation from the pseudocode to the real world is dealing with variables that are undefined along some paths.

- You will want to make sure the output of your "to SSA" pass is actually in SSA form. There's a really simple is_ssa.py script that can check that for you.

- You'll also want to make sure that programs do the same thing when converted to SSA form and back again. Fortunately, brili supports the phi instruction, so you can interpret your SSA-form programs if you want to check the midpoint of that round trip.

- For bonus "points," implement global value numbering for SSA-form Bril code.

Intermediate Representations in Compilers: Static Single Assignment (SSA)

Compilers are program translators: they translate a program from one language to another while preserving its semantics. Usually the source language is a higher-level programming language like C++ or Java, and the target language is a machine-level language like assembly or a virtual machine language like JVM bytecode. Although it doesn’t have to be this way, the target language is almost always lower level than the source language.

To do such a transformation, it helps to have what are called intermediate representations or IRs of the program. Instead of translating in one single go to the target language, translation happens progressively between these representations. This process is also called lowering because we’re converting from higher level representations to progressively lower level representations. Some recognizable intermediate representations for imperative languages include abstract syntax tree , control flow graph and static single assignment (SSA) form. For lisp-like/functional languages, continuation passing style (CPS) is a popular intermediate representation.

In this series of posts, we’ll explain how SSA and CPS representations work. We will then show how these are pretty much the same representations. This series is inspired by Delphine Demange ’s PhD thesis on semantics of intermediate representations .

Static Single Assignment (SSA) Form

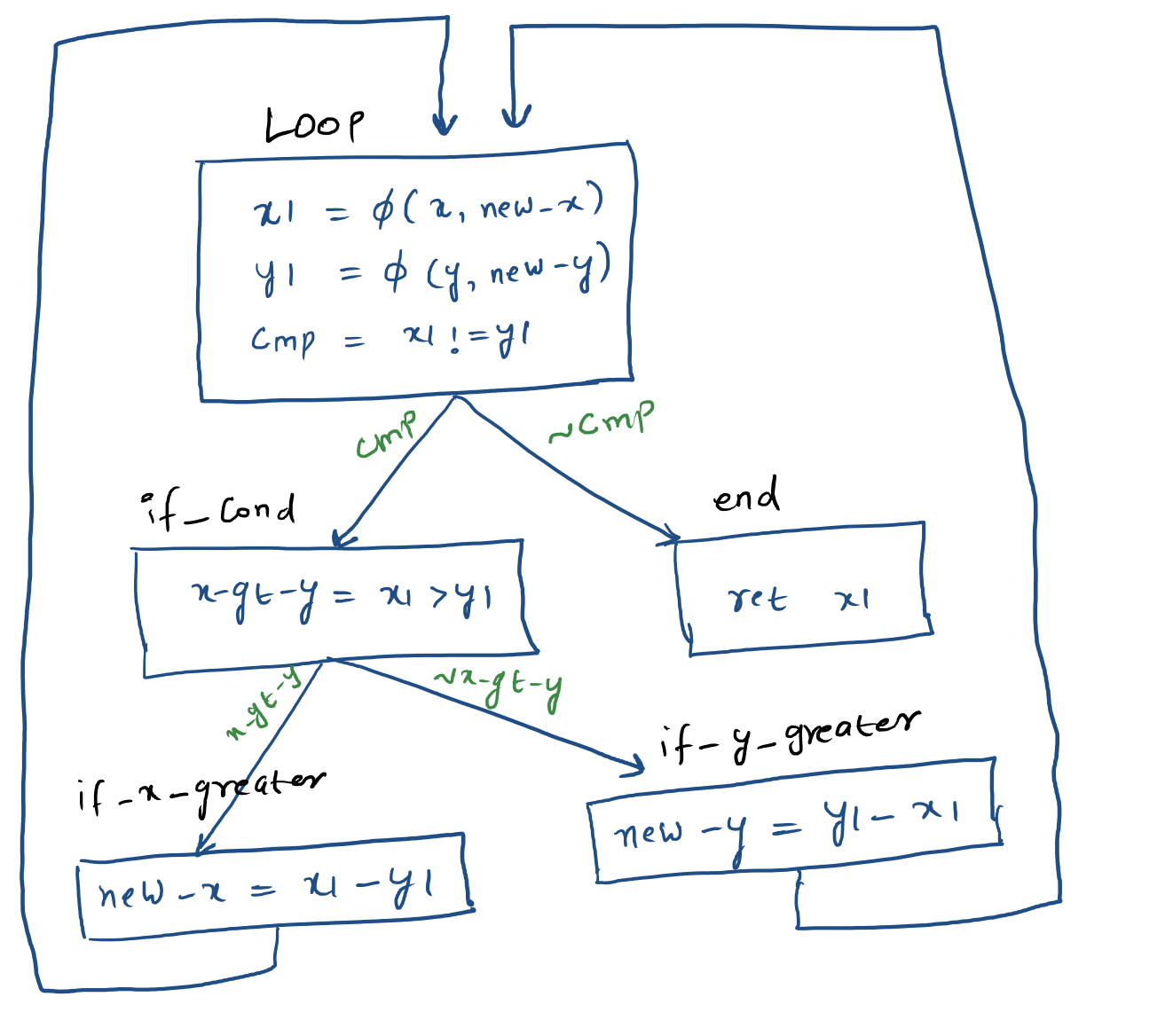

Static single assignment (SSA) is one of the most popular intermediate representations out there. Stalwarts of compiler toolchains, both GCC and LLVM, use this form as IR for multiple languages. SSA introduces the restriction that each variable is assigned only once and never changes its value. If a variable is modified in the original program, a new variable is introduced instead of modifying the old one. This IR is designed to be easily optimizable and neutral between source languages. To give you an idea, here’s what SSA for a GCD program looks like:

We will use LLVM IR to illustrate the SSA form. In the process, we should be able to learn how LLVM IR is generated for different constructs!

Let’s examine LLVM IR for the following simple C program:

To compile this to LLVM IR, you can run the following command: clang -S -emit-llvm -O3 ssa.c . I’ll simplify this representation manually to make it easy to understand.

LLVM IR is typed: that’s why we have i32 next to the add instruction, to indicate that we’re operating on 32-bit integers. To run this LLVM code, let’s create a simple runtime function:

Compile everything together and run resulting executable:

Variable Modification

That was straightforward. Let’s see what the LLVM IR for this function looks like:

In this function, x and y are being modified. So we’ll need to create temporary variables to hold modified values. The C program above will get transformed to something like this:

Compile and verify that we get expected result:

If you were to do something like this, where x is modified:

You’ll get an error:

Conditionals

What happens if there’s a conditional block? Following a conditional, a variable could hold a value from any of the branches. To indicate that the final value is one of these, we use something called a phi function. Consider the following function:

Its LLVM IR will look something like this:

Note the phi instruction in the end block, which indicates that variable %z is one of %add , %add1 and %sub2 from blocks %if_gt , %if_lt and %if_else respectively.

Let’s see how loops get translated in SSA. It should be not too different from conditionals because loops also use conditional branches. Here’s the function to compute GCD:

Its LLVM transformation will look something like this:

From this it should be clear that single assignment is a static property of the program text and not a dynamic property of program execution. That is why there’s ‘static’ in the name of static single assignment form. You can see the pictorial form of this code at the top of the post.

This post should give a good taste of what SSA is and how it works. In the next post, let’s examine what continuation passing style is and how it works.

References:

- Douglas Thain, Introduction to Compilers and Language Design

- Delphine Demange, Semantic Foundations of Intermediate Program Representations

- Mukul Rathi, A Complete Guide to LLVM for Programming Language Creators

- Andrew Appel, SSA is Functional Programming

SSA Tutorial

haotianmichael/ssa-compiler-book

ssa-compiler-book

0. Introduction

Static single assignment, known as SSA , was developed at IBM Research. Because it makes optimizations fast and powerful, most current commercial and open-source compilers, including LLVM , GCC , HotSpot , and the V8 engine , use SSA as a key IR for program analysis and other purposes. Meanwhile, research on intermediate representations such as MLIR has matured.

This book aims to introduce SSA and explore related techniques in the compiler field, such as program analysis and MLIR.

1. Prerequisites

As prerequisites, you need to know:

- functional languages (OCaml is good)

- static program analysis (dataflow and fixed points)

- compilers (implementations and usages of IR and CodeGen )

2. TextBook

We use the well-known ssa-book as the textbook for learning SSA . Here are my learning notes in Chinese.

3. Advanced

TODO: LLVM IR, MLIR, Relay...

3.2. Projects

TODO: Ocaml, Cpp...

Static single-assignment form. In compiler design, static single assignment form (often abbreviated as SSA form or simply SSA) is a type of intermediate representation (IR) where each variable is assigned exactly once. SSA is used in most high-quality optimizing compilers for imperative languages, including LLVM, the GNU Compiler Collection ...

SSA form. Static single-assignment form arranges for every value computed by a program to have a unique assignment (aka, "definition"). A procedure is in SSA form if every variable has (statically) exactly one definition. SSA form simplifies several important optimizations, including various forms of redundancy elimination. Example.

Computing Static Single Assignment (SSA) Form Overview •What is SSA? •Advantages of SSA over use-def chains •"Flavors" of SSA •Dominance frontier •Control dependence •Inserting φ-nodes •Renaming the variables •Translating out of SSA form R. Cytron, J. Ferrante, B. K. Rosen, M. N. Wegman,

•Static Single Assignment (SSA) •CFGs but with immutable variables •Plus a slight "hack" to make graphs work out •Now widely used (e.g., LLVM) •Intra-procedural representation only •An SSA representation for whole program is possible (i.e., each global variable and memory location has static single

SSA. Static single assignment is an IR where every variable is assigned a value at most once in the program text. Easy for a basic block: assign to a fresh variable at each stmt; each use uses the most recently defined var. (Similar to Value Numbering.)

•Add SSA edges from definitions to uses •No intervening statements define variable •Safe to propagate facts about x only along SSA edges Example: SSA x 1:= 3 y 1:= a 1 + b 1 z 1 ... •Computing static single assignment form •Computing control dependencies •Identify (natural) loops in CFG

Static Single Assignment Form L11.7 into variables b 1, e 1, and r 1. That fact that labeled jumps correspond to moving values from arguments to label parameters will be the essence of how to generate assembly code from the SSA intermediate form in Section7. 4 SSA and Functional Programs

Why SSA? Static Single Assignment advantages: dataflow analysis and code optimization are made simpler. Variables have only one definition, so there is no ambiguity. Dominator information is encoded in the assignments. Less space is required to represent def-use chains. For each variable, space is proportional to uses * defs.

Static Single Assignment (SSA) • Static single assignment is an IR where every variable is assigned a value at most once in the program text • Easy for a basic block (reminiscent of Value Numbering): -Visit each instruction in program order: •LHS: assign to a fresh version of the variable •RHS: use the most recent version of each variable

In Static Single Assignment (SSA) Form each assignment to a variable, v, is changed into a unique assignment to new variable, v i. If variable v has n assignments to it throughout the program, then (at least) n new variables, v 1 to v n, are created to replace v. All uses of v are

By popular demand, I'm doing another LLVM post. This time, it's single static assignment (or SSA) form, a common feature in the intermediate representations of optimizing compilers. Like the last one, SSA is a topic in compiler and IR design that I mostly understand but could benefit from some self-guided education on.

13.3 Static Single Assignment. ¶. Most of the tree optimizers rely on the data flow information provided by the Static Single Assignment (SSA) form. We implement the SSA form as described in R. Cytron, J. Ferrante, B. Rosen, M. Wegman, and K. Zadeck. Efficiently Computing Static Single Assignment Form and the Control Dependence Graph.

Thirty years later, the Static Single Assignment (SSA) form was introduced by Alpern, Rosen, Wegman and Zadeck as a tool for efficient optimization in a pair of POPL papers [2, 35], and three years after that Cytron and Ferrante joined Rosen, Wegman, and Zadeck in explaining how to compute SSA form efficiently in what has since be- ...

If the program has this form, called static single assignment (SSA), then we can perform constant propagation immediately in the example above without further checks. There are other program analyses and op- ... Static Single Assignment Form L6.2 2 Basic Blocks A basic block is a sequence of instructions with one entry point and one exit point.

This book provides readers with a single-source reference to static-single assignment (SSA)-based compiler design. It is the first (and up to now only) book that covers in a deep and comprehensive way how an optimizing compiler can be designed using the SSA form. After introducing vanilla SSA and its main properties, the authors

Static single-assignment form (ssa) is a naming discipline that many modern compilers use to encode information about both the flow of control and the flow of data values in the program. In ssa form, names correspond uniquely to specific definition points in the code; each name is defined by one operation, hence the name static single ...

Static Single Assignment Book Wednesday 30th May, 2018 16:12. 2 ... Part I Vanilla SSA 1 Introduction — (J. Singer)..... 5 1.1 Definition of SSA 1.2 Informal semantics of SSA ... 10.1 Static single information form 10.2 Control dependencies 10.3 Gated-SSA forms 10.4 Psi-SSA form 10.5 Array SSA form

The static single-assignment (SSA) form is a program representation in which variables are split into "instances." Every new assignment to a variable — or more generally, every new definition of a variable — results in a new instance. The variable instances are numbered so that each use of a variable may be easily
